Intel Launches Cooper Lake: 3rd Generation Xeon Scalable for 4P/8P Servers

by Dr. Ian Cutress on June 18, 2020 9:00 AM EST

Performance and Deployments

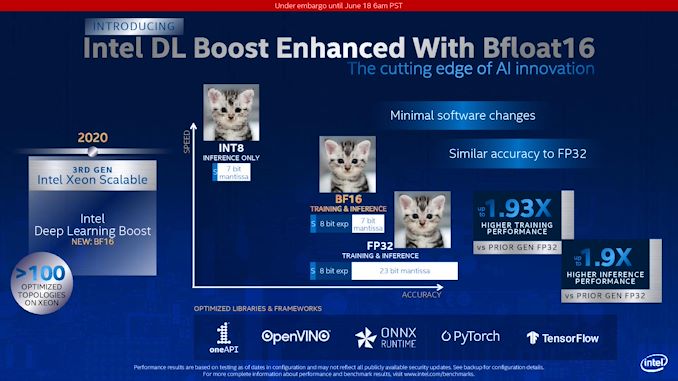

As part of the discussion points, Intel stated that it has integrated BF16 support into the usual array of frameworks and utilities that it groups under ‘Intel DL Boost’. This includes PyTorch, TensorFlow, OneAPI, OpenVino, and ONNX. We spoke with Wei Li, who heads up Intel’s AI Software Group, and he confirmed that all of these libraries have already been updated for use with BF16. For high-level programmers, these libraries will accept FP32 data and convert it to BF16 automatically; however, the functions will still require an indication to use BF16 rather than INT8 or another format.
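As a rough illustration of what an FP32-to-BF16 conversion involves, the sketch below keeps the top 16 bits of an FP32 value and rounds the discarded bits to nearest even. This is not Intel's or any framework's code: the function names are hypothetical, and the rounding mode and NaN handling are assumptions made for the example.

```python
import struct


def fp32_to_bf16_bits(x: float) -> int:
    """Return a 16-bit BF16 pattern for x (hypothetical helper).

    Assumes round-to-nearest-even on the 16 discarded mantissa bits.
    """
    # Reinterpret the value as its 32-bit IEEE 754 single-precision pattern.
    bits = struct.unpack("<I", struct.pack("<f", x))[0]
    # NaN: truncate and force a mantissa bit so the result stays a NaN.
    if (bits & 0x7F800000) == 0x7F800000 and (bits & 0x007FFFFF):
        return (bits >> 16) | 0x0040
    # Add 0x7FFF plus the lowest kept bit, then drop the low 16 bits.
    rounding_bias = 0x7FFF + ((bits >> 16) & 1)
    return ((bits + rounding_bias) >> 16) & 0xFFFF


def bf16_bits_to_fp32(b: int) -> float:
    """Widen a BF16 pattern back to FP32 by zero-filling the low 16 bits."""
    return struct.unpack("<f", struct.pack("<I", (b & 0xFFFF) << 16))[0]


def round_trip(x: float) -> float:
    """Value of x after being stored as BF16 and read back."""
    return bf16_bits_to_fp32(fp32_to_bf16_bits(x))


if __name__ == "__main__":
    for value in (1.0, 3.14159265, 1e38):
        print(value, "->", round_trip(value))
```

Because BF16 is simply the upper half of an FP32 word, widening back to FP32 is a shift, which is part of why FP32 data can be handed to BF16 code paths so cheaply.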

Wei Li also confirmed that all the major CSPs who have taken delivery of Cooper Lake are already porting workloads onto BF16, and have been for quite some time. That isn’t to say that BF16 is suitable for every workload, but it provides a balance between the numerical range of FP32 and the computational speed of FP16. As noted in the slide above, BF16 implementations are achieving up to ~1.9x speedups over FP32 on both training and inference with Intel’s various CSP customers.
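The trade-off between the three formats can be made concrete from their bit layouts. The sketch below uses the standard exponent and mantissa widths (FP32: 8/23, FP16: 5/10, BF16: 8/7) to derive the largest finite value and the spacing between 1.0 and the next representable number. The class and property names are hypothetical, chosen only for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FloatFormat:
    """Bit layout of a binary floating-point format (illustrative only)."""
    name: str
    exponent_bits: int
    mantissa_bits: int

    @property
    def max_finite(self) -> float:
        # Largest finite value: all mantissa bits set, top non-reserved exponent.
        bias = 2 ** (self.exponent_bits - 1) - 1
        return (2 - 2.0 ** -self.mantissa_bits) * 2.0 ** bias

    @property
    def epsilon(self) -> float:
        # Gap between 1.0 and the next representable value.
        return 2.0 ** -self.mantissa_bits


FORMATS = {
    "FP32": FloatFormat("FP32", 8, 23),
    "FP16": FloatFormat("FP16", 5, 10),
    "BF16": FloatFormat("BF16", 8, 7),
}

if __name__ == "__main__":
    for fmt in FORMATS.values():
        print(f"{fmt.name}: max ~{fmt.max_finite:.3e}, epsilon {fmt.epsilon:.3e}")
```

BF16 shares FP32's 8-bit exponent, so it covers nearly the same range, while its 7-bit mantissa makes it coarser than FP16 near any given value.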

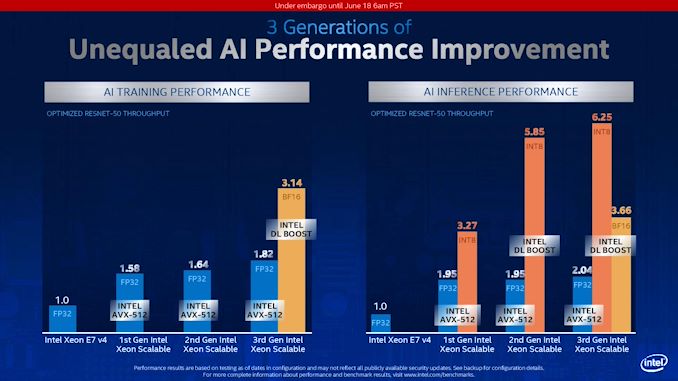

Normally we don’t post too many graphs of first-party performance numbers, but I did want to add this one.

Here we see Intel’s BF16 DL Boost at work for ResNet-50 in both training and inference. ResNet-50 is an old network at this point, but it is still used as a reference point for performance given its limited scope in layers and convolutions. Here Intel is showing a 72% increase in training performance with Cooper Lake in BF16 mode vs Cooper Lake in FP32 mode.

Inference is a bit different, because inference can take advantage of lower-precision, higher-throughput data types such as INT8 and INT4. Here we see BF16 still giving 1.8x the performance of normal FP32 AVX512, but INT8 has the throughput advantage. This is a balance of speed and accuracy.

It should be noted that this graph also includes software optimizations over time, not only raw performance of the same code across multiple platforms.

I would like to point out the standard FP32 performance generation-on-generation. For AI training, Intel is showing 1.82/1.64 = 1.11x, or an 11% gain, while for inference we see 2.04/1.95 = 1.046x, or a 4.6% gain. Given that Cooper Lake uses the same cores underneath as Cascade Lake, this is mostly due to core frequency increases as well as bandwidth increases.
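For anyone wanting to check the arithmetic, here is a minimal sketch using only the four relative-performance figures quoted above; the function name is hypothetical.

```python
def gain_percent(new: float, old: float) -> float:
    """Percentage improvement of `new` over `old`."""
    return (new / old - 1.0) * 100.0


# Relative FP32 performance figures quoted in the text above.
training_gain = gain_percent(1.82, 1.64)
inference_gain = gain_percent(2.04, 1.95)

if __name__ == "__main__":
    print(f"Training:  {training_gain:.1f}%")
    print(f"Inference: {inference_gain:.1f}%")
```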

Deployments

A number of companies reached out to us in advance of the launch to tell us about their systems.

Lenovo will be announcing the launch of its ThinkSystem SR860 V2 and SR850 V2 servers with Cooper Lake and Optane DCPMM. The SR860 V2 will support up to four double-wide 300W GPUs in a dual socket configuration.

The fact that Lenovo is offering 2P variants of Cooper Lake is quite puzzling, especially as Intel said these were aimed at 4P systems and up. Hopefully we can get one in for testing.

Also, GIGABYTE is announcing its R292-4S0 and R292-4S1 servers, both quad socket.

One of Intel’s partners stated to us that they were not expecting Cooper Lake to launch so soon, or even within the next quarter. As a result, they were caught off guard and had to scramble to get materials for this announcement. It would appear that Intel had a need to pull this announcement forward, perhaps because one of the major CSPs is ready to announce.

99 Comments

Zizy - Thursday, June 18, 2020 - link

BF16 maintains range, not accuracy.

ABR - Sunday, June 21, 2020 - link

Yes, what the article SHOULD have said, rather than spending a paragraph dancing around it and then finally giving the table.

SarahKerrigan - Thursday, June 18, 2020 - link

So in other words: "one platform from 1s to 8s" is dead and Xeon-EX is back. Okay then, Intel.

Deicidium369 - Thursday, June 18, 2020 - link

Same platform - Whitley. Segmentation between 1-2S and 4-8S - the customers for the 4 to 8 socket Cooper Lake are quite different from the 1 to 2 socket Ice Lake SP.

Ice Lake SP is general purpose, Cooper Lake is geared more towards AI and Hyperscalers.

SarahKerrigan - Thursday, June 18, 2020 - link

Are you sure? The leaked roadmaps a few months ago say that only the mainstream Cooper MCMs (since cancelled) are on Whitley, and the 4-8s Coopers are on their own platform, Cedar Island.

https://cdn.wccftech.com/wp-content/uploads/2019/0...

Deicidium369 - Friday, June 19, 2020 - link

You are correct - seems that all Cooper Lakes will be on the Cedar Island platform and not Whitley. They will share a variant of the new socket with Ice Lake SP. It was my understanding that Whitley was split - 2S for ICL SP and 4-8 for the 28C Cooper, with the 56C MCMs being on Cedar

If the MCMs were canceled (some have been produced and gone into super computers using 1st Xeon Scalable based systems) then it makes sense for Cooper to be on Cedar Island now.

So I stand corrected on the platform. The markets are still quite different for Cooper and ICL, it seems just the platform changed for Cooper.

Spunjji - Friday, June 19, 2020 - link

Who needs honesty when you have most of the market snugly in your back pocket? 😂

YB1064 - Thursday, June 18, 2020 - link

Okay, but can it run Crysis?

Dragonstongue - Thursday, June 18, 2020 - link

better question, does it smoke any AMD products such as the Threadripper 3xxx or EPYC 3rd gen?

I didnt think so, not sure why Intel is charging such a high price per core etc

SarahKerrigan - Thursday, June 18, 2020 - link

Epyc 3rd gen doesn't exist today, and 4/8s x86 is mostly a specialized high-end market (much of it driven by replacement of RISC/UNIX systems) where AMD doesn't play. Whether the pricing is justified is obviously open for debate, but there's historical precedent for higher costs for 8s-capable parts.