A Broadwell Retrospective Review in 2020: Is eDRAM Still Worth It?

by Dr. Ian Cutress on November 2, 2020 11:00 AM EST

CPU Tests: Office and Science

Our previous sets of ‘office’ benchmarks have often been a mix of science and synthetics, so this time we wanted to keep our office section purely on real world performance.

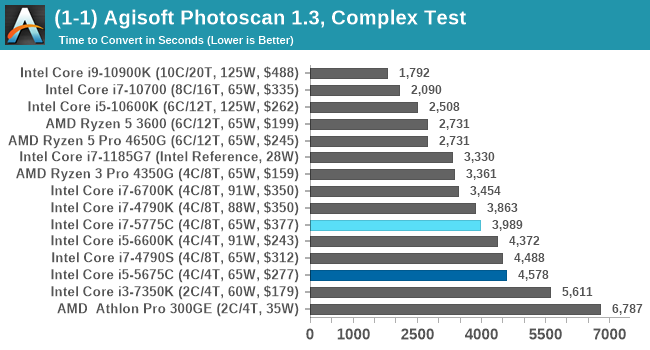

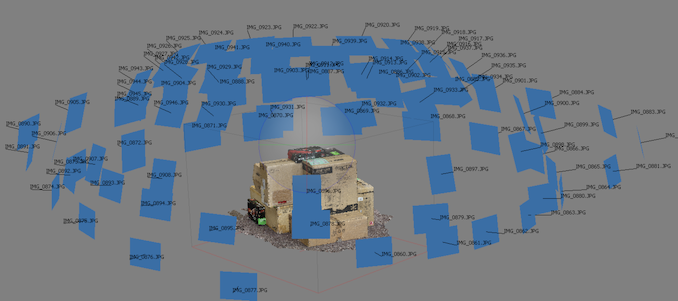

Agisoft Photoscan 1.3.3: link

The concept of Photoscan is about translating many 2D images into a 3D model - so the more detailed the images, and the more you have, the better the final 3D model in both spatial accuracy and texturing accuracy. The algorithm has four stages, with some parts of the stages being single-threaded and others multi-threaded, along with some cache/memory dependency in there as well. For the more variably threaded parts of the workload, features such as Speed Shift and XFR will be able to take advantage of CPU stalls or downtime, giving sizeable speedups on newer microarchitectures.

For the update to version 1.3.3, the Agisoft software now supports command line operation. Agisoft provided us with a set of new images for this version of the test, and a python script to run it. We’ve modified the script slightly by changing some quality settings for the sake of the benchmark suite length, as well as adjusting how the final timing data is recorded. The python script dumps the results file in the format of our choosing. For our test we obtain the time for each stage of the benchmark, as well as the overall time.
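As a rough illustration of recording the time for each stage as well as the overall time, a timing wrapper might look like the sketch below. This is not our actual script and it does not use Agisoft's API: the `time_stages` and `write_results` helpers, the stage names, and the placeholder stage bodies are all hypothetical.

```python
import time

def time_stages(stages):
    """Run each (name, callable) pair in order; return per-stage and total seconds."""
    results = {}
    overall_start = time.perf_counter()
    for name, run_stage in stages:
        start = time.perf_counter()
        run_stage()  # placeholder for the real work of this stage
        results[name] = time.perf_counter() - start
    results["total"] = time.perf_counter() - overall_start
    return results

def write_results(results, path):
    """Dump one 'name,seconds' line per entry, in the order the stages ran."""
    with open(path, "w") as handle:
        for name, seconds in results.items():
            handle.write(f"{name},{seconds:.3f}\n")

# Hypothetical four-stage pipeline; each lambda stands in for a real stage.
example_stages = [
    ("stage_1", lambda: sum(range(10_000))),
    ("stage_2", lambda: sorted(range(10_000), reverse=True)),
    ("stage_3", lambda: [i * i for i in range(10_000)]),
    ("stage_4", lambda: max(range(10_000))),
]
```

Calling `time_stages(example_stages)` returns a dictionary with one entry per stage plus a `total` entry, which `write_results` can then dump to a file in whatever layout the rest of the suite expects.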

The extra power budget of the Devil's Canyon part lets it pull ahead of the Core i7-5775C.

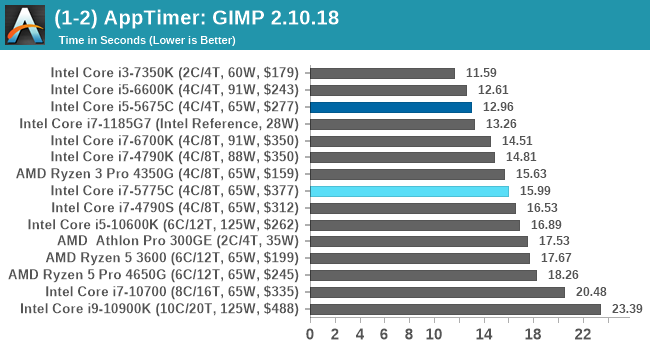

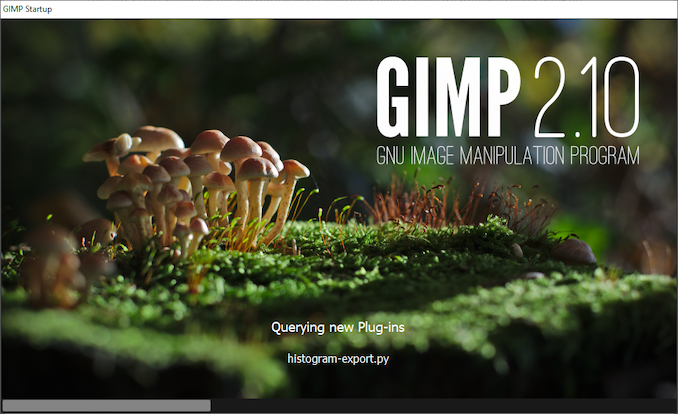

Application Opening: GIMP 2.10.18

First up is a test using a monstrous multi-layered xcf file to load GIMP. While the file is only a single ‘image’, it has so many high-quality layers embedded it was taking north of 15 seconds to open and to gain control on the mid-range notebook I was using at the time.

What we test here is the first run - normally on the first time a user loads the GIMP package from a fresh install, the system has to configure a few dozen files that remain optimized on subsequent opening. For our test we delete those configured optimized files in order to force a ‘fresh load’ each time the software is run.

We measure the time taken from calling the software to be opened, and until the software hands itself back over to the OS for user control. The test is repeated for a minimum of ten minutes or at least 15 loops, whichever comes first, with the first three results discarded.
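The loop logic can be sketched roughly as follows. This is an illustration rather than our harness: `load_once` and `reset_state` are hypothetical callables standing in for launching the application and deleting its configured files, and the stopping rule assumes the run ends as soon as either the 15-loop or the ten-minute limit is reached.

```python
import time

def run_load_test(load_once, reset_state, loops=15, max_seconds=600.0, discard=3):
    """Time repeated fresh loads, stop at whichever limit is hit first,
    then drop the first few results and average the rest."""
    timings = []
    started = time.perf_counter()
    while len(timings) < loops and time.perf_counter() - started < max_seconds:
        reset_state()  # hypothetical: remove cached files to force a fresh load
        t0 = time.perf_counter()
        load_once()    # hypothetical: launch the app, return once it is responsive
        timings.append(time.perf_counter() - t0)
    kept = timings[discard:]
    # None signals that too few runs completed to report a result.
    return sum(kept) / len(kept) if kept else None
```

Discarding the first results keeps one-off effects, such as cold disk caches, out of the reported average.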

GIMP does optimizations for every CPU thread in the system, which means that higher thread-count processors take a lot longer to run.

Science

In this version of our test suite, all the science focused tests that aren’t ‘simulation’ work are now in our science section. This includes Brownian Motion, calculating digits of Pi, molecular dynamics, and for the first time, we’re trialing an artificial intelligence benchmark, both inference and training, that works under Windows using python and TensorFlow. Where possible these benchmarks have been optimized with the latest in vector instructions, except for the AI test – we were told that while it uses Intel’s Math Kernel Libraries, they’re optimized more for Linux than for Windows, and so it gives an interesting result when unoptimized software is used.

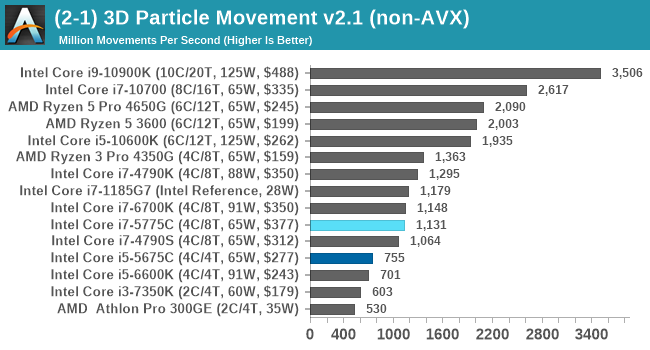

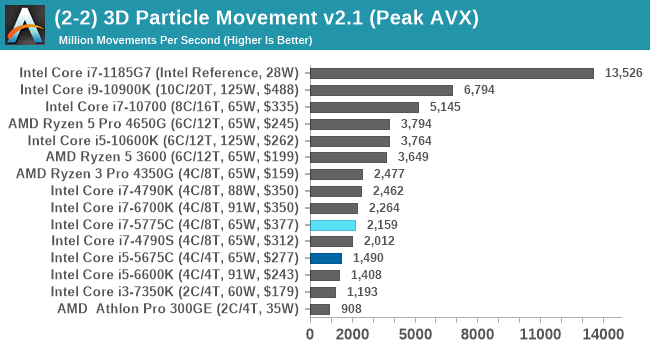

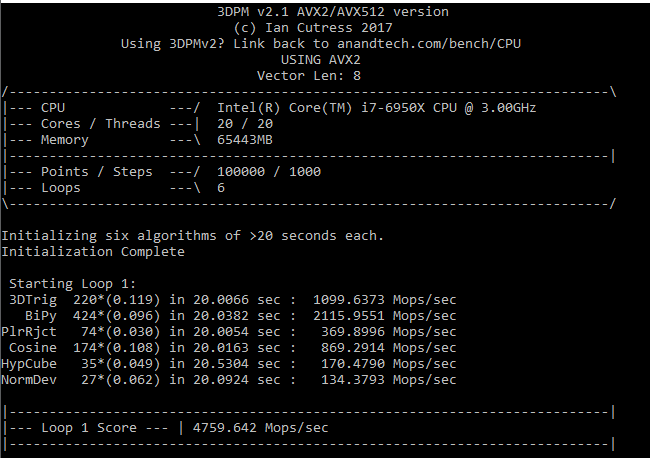

3D Particle Movement v2.1: Non-AVX and AVX2/AVX512

This is the latest version of this benchmark designed to simulate semi-optimized scientific algorithms taken directly from my doctorate thesis. This involves randomly moving particles in a 3D space using a set of algorithms that define random movement. Version 2.1 improves over 2.0 by passing the main particle structs by reference rather than by value, and decreasing the amount of double->float->double recasts the compiler was adding in.

The initial version of v2.1 is a custom C++ binary of my own code, and flags are in place to allow for multiple loops of the code with a custom benchmark length. By default this version runs six times and outputs the average score to the console, which we capture with a redirection operator that writes to file.

For v2.1, we also have a fully optimized AVX2/AVX512 version, which uses intrinsics to get the best performance out of the software. This was done by a former Intel AVX-512 engineer who now works elsewhere. According to Jim Keller, there are only a couple dozen or so people who understand how to extract the best performance out of a CPU, and this guy is one of them. To keep things honest, AMD also has a copy of the code, but has not proposed any changes.

The 3DPM test is set to output millions of movements per second, rather than time to complete a fixed number of movements.
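To make the metric concrete, here is a toy sketch of random 3D particle movement scored in millions of movements per second. It is not the 3DPM code: the single movement rule (a unit step in a uniformly random direction), the function names, and the default particle and step counts are all assumptions chosen for illustration.

```python
import math
import random
import time

def random_unit_step(rng):
    """Pick a uniformly random direction on the unit sphere."""
    z = rng.uniform(-1.0, 1.0)
    phi = rng.uniform(0.0, 2.0 * math.pi)
    r = math.sqrt(1.0 - z * z)
    return r * math.cos(phi), r * math.sin(phi), z

def millions_of_movements_per_second(particles=1000, steps=100, seed=0):
    """Move every particle once per step and report the rate in millions/s."""
    rng = random.Random(seed)
    positions = [[0.0, 0.0, 0.0] for _ in range(particles)]
    start = time.perf_counter()
    for _ in range(steps):
        for pos in positions:
            dx, dy, dz = random_unit_step(rng)
            pos[0] += dx
            pos[1] += dy
            pos[2] += dz
    elapsed = time.perf_counter() - start
    return particles * steps / elapsed / 1e6
```

Reporting a rate rather than a completion time means a higher number is always better, and the score stays comparable if the loop count or benchmark length is changed.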

3DPM isn't memory limited, and as a result we see a relative natural order of performance.

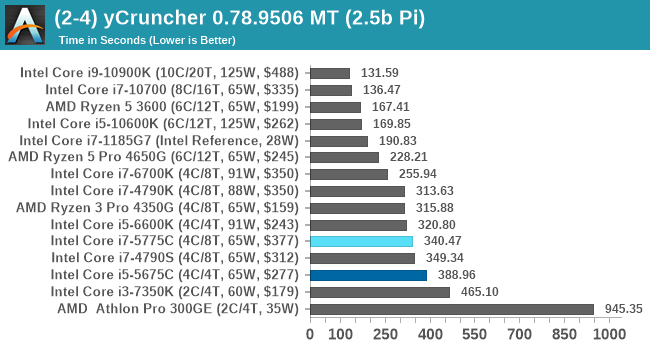

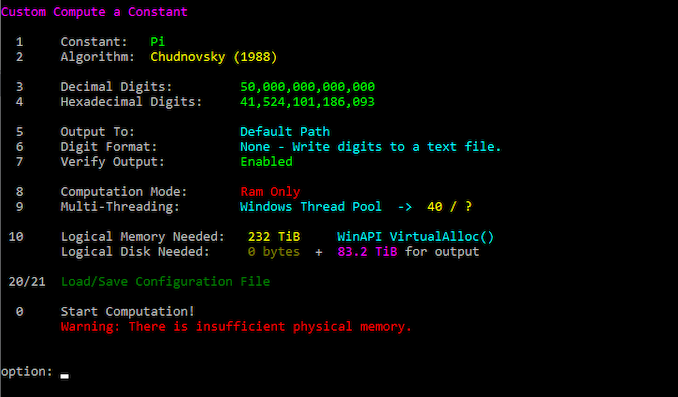

y-Cruncher 0.78.9506: www.numberworld.org/y-cruncher

If you ask anyone what sort of computer holds the world record for calculating the most digits of pi, I can guarantee that a good portion of those answers might point to some colossus supercomputer built into a mountain by a super-villain. Fortunately nothing could be further from the truth – the computer with the record is a quad socket Ivy Bridge server with 300 TB of storage. The software that was run to get that was y-cruncher.

Built by Alex Yee over the better part of a decade and then some, y-Cruncher is the software of choice for calculating billions and trillions of digits of the most popular mathematical constants. The software has held the world record for Pi since August 2010, and has broken the record a total of 7 times since. It also holds records for e, the Golden Ratio, and others. According to Alex, the program runs around 500,000 lines of code, and he has multiple binaries each optimized for different families of processors, such as Zen, Ice Lake, Skylake, all the way back to Nehalem, using the latest SSE/AVX2/AVX512 instructions where they fit in, and then further optimized for how each core is built.

For our purposes, we’re calculating Pi, as it is more compute bound than memory bound. In single thread mode we calculate 250 million digits, while in multithreaded mode we go for 2.5 billion digits. That 2.5 billion digit value requires ~12 GB of DRAM, and so is limited to systems with at least 16 GB.
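For a feel of what computing digits of Pi involves at a tiny scale, the sketch below uses Machin's formula with plain Python integers. It is far simpler and slower than anything y-cruncher does and is not its method; the function names and the ten guard digits are assumptions made for this example.

```python
def arctan_inverse(x, unity):
    """Return arctan(1/x) scaled by `unity`, via the alternating Taylor series."""
    term = unity // x
    total = term
    x_squared = x * x
    divisor = 3
    sign = -1
    while term:
        term //= x_squared
        total += sign * (term // divisor)
        sign = -sign
        divisor += 2
    return total

def pi_digits(decimals):
    """Return '3' followed by `decimals` decimal digits of Pi, as a string."""
    guard = 10  # extra digits to absorb integer truncation error
    unity = 10 ** (decimals + guard)
    # Machin's formula: pi = 16*arctan(1/5) - 4*arctan(1/239)
    scaled_pi = 4 * (4 * arctan_inverse(5, unity) - arctan_inverse(239, unity))
    return str(scaled_pi // 10 ** guard)
```

For example, `pi_digits(10)` returns `"31415926535"`. Every digit requested adds to the size of the integers being divided, which hints at why billions of digits need gigabytes of memory.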

Despite being a more memory driven benchmark, y-Cruncher here follows a more traditional performance order.

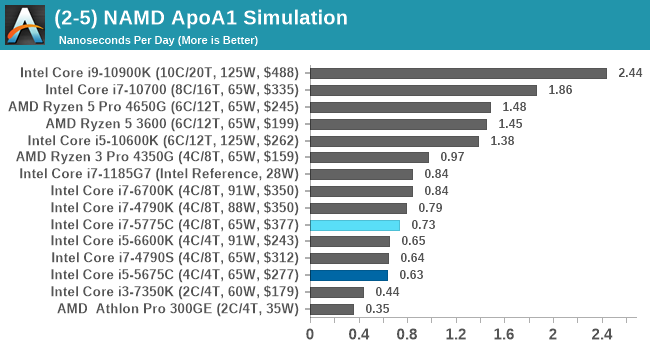

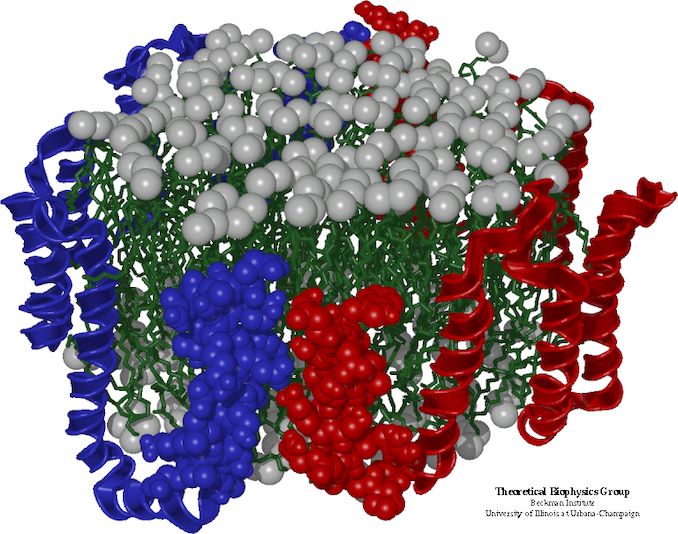

NAMD 2.13 (ApoA1): Molecular Dynamics

One of the popular science fields is modeling the dynamics of proteins. By looking at how the energy of active sites within a large protein structure changes over time, scientists behind the research can calculate required activation energies for potential interactions. This becomes very important in drug discovery. Molecular dynamics also plays a large role in protein folding, and in understanding what happens when proteins misfold, and what can be done to prevent it. Two of the most popular molecular dynamics packages in use today are NAMD and GROMACS.

NAMD, or Nanoscale Molecular Dynamics, has already been used in extensive Coronavirus research on the Frontera supercomputer. Typical simulations using the package are measured in how many nanoseconds per day can be calculated with the given hardware, and the ApoA1 protein (92,224 atoms) has been the standard model for molecular dynamics simulation.

Luckily the compute can home in on a typical ‘nanoseconds-per-day’ rate after only 60 seconds of simulation, however we stretch that out to 10 minutes to take a more sustained value, as by that time most turbo limits should be surpassed. The simulation itself works with 2 femtosecond timesteps. We use version 2.13 as this was the recommended version at the time of integrating this benchmark into our suite. The latest nightly builds we’re aware of have started to enable support for AVX-512, however for the sake of consistency in our benchmark suite, we are staying with 2.13. Other software that we test with has AVX-512 acceleration.
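The conversion from timesteps to a nanoseconds-per-day figure is simple arithmetic, sketched below. The `ns_per_day` helper and its parameter names are hypothetical and not part of NAMD; only the 2 femtosecond timestep comes from our setup.

```python
SECONDS_PER_DAY = 86_400
FEMTOSECONDS_PER_NANOSECOND = 1_000_000

def ns_per_day(steps_completed, wall_seconds, timestep_fs=2.0):
    """Convert completed timesteps and elapsed wall time into simulated ns/day."""
    simulated_ns = steps_completed * timestep_fs / FEMTOSECONDS_PER_NANOSECOND
    return simulated_ns / wall_seconds * SECONDS_PER_DAY
```

As a worked case, 1,000 steps of 2 fs is 0.002 ns of simulated time; if that takes 86.4 seconds of wall time, the rate is 2 ns/day. Measuring over 10 minutes rather than 60 seconds only changes the window that `steps_completed` and `wall_seconds` are taken from.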

Similar to y-Cruncher, the extra eDRAM doesn't afford any benefits for NAMD on this scale. The Devil's Canyon Core i7-4790K is still ahead of the Broadwell i7.

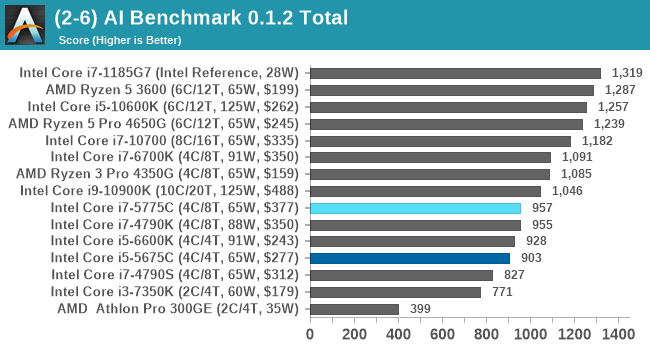

AI Benchmark 0.1.2 using TensorFlow: Link

Finding an appropriate artificial intelligence benchmark for Windows has been a holy grail of mine for quite a while. The problem is that AI is such a fast moving, fast paced world that whatever I compute this quarter will no longer be relevant in the next, and one of the key metrics in this benchmarking suite is being able to keep data over a long period of time. We’ve had AI benchmarks on smartphones for a while, given that smartphones are a better target for AI workloads, but it also makes some sense that everything on PC is geared towards Linux as well.

Thankfully however, the good folks over at ETH Zurich in Switzerland have converted their smartphone AI benchmark into something that’s useable in Windows. It uses TensorFlow, and for our benchmark purposes we’ve locked our testing down to TensorFlow 2.10, AI Benchmark 0.1.2, while using Python 3.7.6.

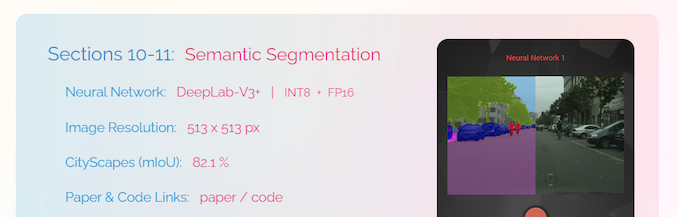

The benchmark runs through 19 different networks including MobileNet-V2, ResNet-V2, VGG-19 Super-Res, NVIDIA-SPADE, PSPNet, DeepLab, Pixel-RNN, and GNMT-Translation. All the tests probe both the inference and the training at various input sizes and batch sizes, except the translation that only does inference. It measures the time taken to do a given amount of work, and spits out a value at the end.

There is one big caveat for all of this, however. Speaking with the folks over at ETH, they use Intel’s Math Kernel Libraries (MKL) for Windows, and they’re seeing some incredible drawbacks. I was told that MKL for Windows doesn’t play well with multiple threads, and as a result any Windows results are going to perform a lot worse than Linux results. On top of that, after a given number of threads (~16), MKL kind of gives up and performance drops off quite substantially.

So why test it at all? Firstly, because we need an AI benchmark, and a bad one is still better than not having one at all. Secondly, if MKL on Windows is the problem, then by publicizing the test, it might just put a boot somewhere for MKL to get fixed. To that end, we’ll stay with the benchmark as long as it remains feasible.

The AI-Benchmark (ETH) doesn't necessarily follow a standard performance cadence due to MKL on Windows, but the Broadwell parts both score under 1000 pts here.

120 Comments

krowes - Monday, November 2, 2020 - link

CL22 memory for the Ryzen setup? Makes absolutely no sense.

Ian Cutress - Tuesday, November 3, 2020 - link

That's JEDEC standard.

Khenglish - Monday, November 2, 2020 - link

Was anyone else bothered by the fact that Intel's highest performing single thread CPU is the 1185G7, which is only accessible in 28W tiny BGA laptops?

Also the 128mb edram cache does seem to make on average a 10% improvement over the edramless 4790S at the same TDP. I would love to see edram on more cpus. It's so rare to need more than 8 cores. I'd rather have 8 cores with edram than 16+ cores and no edram.

ichaya - Monday, November 2, 2020 - link

There's definitely a cost trade-off involved, but with an I/O die since Zen 2, it seems like AMD could just spin up a different I/O die, and justify the cost easily by selling to HEDT/Workstation/DC.

Notmyusualid - Wednesday, November 4, 2020 - link

Chalk me up as 'bothered'.

zodiacfml - Monday, November 2, 2020 - link

Yeah but Intel is about squeezing the last dollar in its products for a couple of years now.

Endymio - Monday, November 2, 2020 - link

CPU register-> 3 levels of cache -> eDRAM -> DRAM -> Optane -> SSD -> Hard Drive.

The human brain gets by with 2 levels of storage. I really don't feel that computers should require 9. The entire approach needs rethinking.

Tomatotech - Tuesday, November 3, 2020 - link

You remember everything without writing down anything? You remarkable person.

The rest of us rely on written materials, textbooks, reference libraries, wikipedia, and the internet to remember stuff. If you jot down all the levels of hierarchical storage available to the average degree-educated person, it's probably somewhere around 9 too depending on how you count it.

Not everything you need to find out is on the internet or in books either. Data storage and retrieval also includes things like having to ask your brother for Aunt Jenny's number so you can ring Aunt Jenny and ask her some detail about early family life, and of course Aunt Jenny will tell you to go and ring Uncle Jonny, but she doesn't have Jonny's number, wait a moment while she asks Max for it and so on.

eastcoast_pete - Tuesday, November 3, 2020 - link

You realize that the closer the cache is to actual processor speed, the more demanding the manufacturing gets and the more die area it eats. That's why there aren't any (consumer) CPUs with 1 or more MB of L1 Cache. Also, as Tomatotech wrote, we humans use mnemonic assists all the time, so the analogy short-term/long-term memory is incomplete. Writing and even drawing was invented to allow for longer-term storage and easier distribution of information. Lastly, at least IMO, it boils down to cost vs. benefit/performance as to how many levels of memory storage are best, and depends on the usage scenario.

Oxford Guy - Monday, November 2, 2020 - link

Peter Bright of Ars in 2015:

"Intel’s Skylake lineup is robbing us of the performance king we deserve. The one Skylake processor I want is the one that Intel isn't selling.

in games the performance was remarkable. The 65W 3.3-3.7GHz i7-5775C beat the 91W 4-4.2GHz Skylake i7-6700K. The Skylake processor has a higher clock speed, it has a higher power budget, and its improved core means that it executes more instructions per cycle, but that enormous L4 cache meant that the Broadwell could offset its disadvantages and then some. In CPU-bound games such as Project Cars and Civilization: Beyond Earth, the older chip managed to pull ahead of its newer successor.

in memory-intensive workloads, such as some games and scientific applications, the cache is better than 21 percent more clock speed and 40 percent more power. That's the kind of gain that doesn't come along very often in our dismal post-Moore's law world.

Those 5775C results tantalized us with the prospect of a comparable Skylake part. Pair that ginormous cache with Intel's latest-and-greatest core and raise the speed limit on the clock speed by giving it a 90-odd W power envelope, and one can't help but imagine that the result would be a fine processor for gaming and workstations alike. But imagine is all we can do because Intel isn't releasing such a chip. There won't be socketed, desktop-oriented eDRAM parts because, well, who knows why.

Intel could have had a Skylake processor that was exciting to gamers and anyone else with performance-critical workloads. For the right task, that extra memory can do the work of a 20 percent overclock, without running anything out of spec. It would have been the must-have part for enthusiasts everywhere. And I'm tremendously disappointed that the company isn't going to make it."

In addition to Bright's comments I remember Anandtech's article that showed the 5675C beating or equalling the 5775C in one or more gaming tests, apparently largely due to the throttling due to Intel's decision to hobble Broadwell with such a low TDP.