Intel Launches Cooper Lake: 3rd Generation Xeon Scalable for 4P/8P Servers

by Dr. Ian Cutress on June 18, 2020 9:00 AM EST

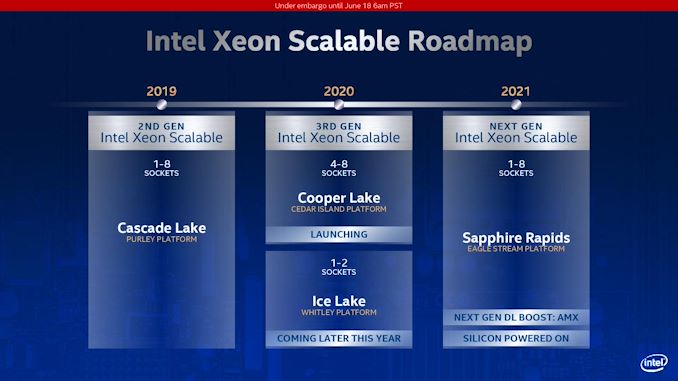

We’ve known about Intel’s Cooper Lake platform for a number of quarters. As far as we understand, it was initially planned as a custom silicon variant of Cascade Lake for Intel’s high-profile customers, and was subsequently productized to fill the gap in Intel’s roadmap left by the delayed development of 10nm for Xeon. Cooper Lake was originally set to be a full-range update to the product stack, but last quarter Intel declared that the platform would end up solely in the hands of its priority customers, and only as a quad-socket or higher platform. Today, Intel launches Cooper Lake and confirms that Ice Lake is set to come out later this year, aimed at the 1P/2P markets.

Count Your Coopers: BFloat16 Support

Cooper Lake Xeon Scalable is officially designated as Intel’s 3rd Generation Xeon Scalable for high socket-count servers. Ice Lake Xeon Scalable, when it launches later this year, will also be called 3rd Generation Xeon Scalable, but for low socket-count (1P/2P) servers.

For Cooper Lake, Intel has made three key additions to the platform. The first is the addition of AVX-512 BF16 instructions, allowing users to take advantage of the BF16 number format. A number of key AI workloads, typically done in FP32 or FP16, can now be performed in BF16 to get almost the same throughput as FP16 with almost the same range as FP32. Facebook made a big deal about BF16 in its presentation at Hot Chips last year, where it forms a critical part of its Zion platform. At the time the presentation was made, there was no CPU on the market that supported BF16, which led to an amusing exchange at the conference.

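BF16 (bfloat16) is a way of encoding a number in binary that keeps the range of a 32-bit float but fits it into a 16-bit format, so that twice as many values, and twice the compute, can be packed into the same number of bits. In effect, a BF16 value is the top half of an FP32 value: it keeps FP32’s sign bit and 8-bit exponent, but cuts the fraction from 23 bits to 7. The simple table looks a bit like this: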

Data Type Representations

| Type | Bits | Exponent | Fraction | Precision | Range | Speed |
|---|---|---|---|---|---|---|
| float32 | 32 | 8 | 23 | High | High | Slow |
| float16 | 16 | 5 | 10 | Low | Low | 2x Fast |
| bfloat16 | 16 | 8 | 7 | Lower | High | 2x Fast |
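Because BF16 shares FP32’s sign and exponent fields, converting between the two mostly comes down to keeping or dropping the low 16 bits. The sketch below shows that relationship in plain Python using the standard `struct` module. The helper names are our own, and round-to-nearest-even is an assumption about how a conversion might round; it is not a description of Intel’s AVX-512 BF16 instructions. NaN handling is left out for brevity.

```python
import math
import struct


def fp32_bits(x: float) -> int:
    """Raw IEEE 754 single-precision bit pattern of x (x is first rounded to FP32)."""
    return struct.unpack("<I", struct.pack("<f", x))[0]


def to_bf16_bits(x: float) -> int:
    """Round x to BF16 with round-to-nearest-even and return the 16-bit pattern.

    BF16 keeps FP32's sign bit, all 8 exponent bits, and the top 7 fraction bits.
    NaN inputs are not handled in this sketch.
    """
    bits = fp32_bits(x)
    lsb = (bits >> 16) & 1          # lowest bit that survives truncation
    rounding_bias = 0x7FFF + lsb    # exact ties round toward an even result
    return ((bits + rounding_bias) >> 16) & 0xFFFF


def from_bf16_bits(b: int) -> float:
    """Widen a BF16 pattern back to FP32 by appending 16 zero fraction bits."""
    return struct.unpack("<f", struct.pack("<I", b << 16))[0]


if __name__ == "__main__":
    pi_bf16 = to_bf16_bits(math.pi)
    print(hex(pi_bf16), from_bf16_bits(pi_bf16))  # 0x4049 3.140625
    # An FP32-scale magnitude (far beyond FP16's maximum of 65504) survives,
    # just with fewer significant digits:
    print(from_bf16_bits(to_bf16_bits(1e38)))
```

The pi example shows the trade-off in the table: the value keeps its magnitude but only about two to three significant decimal digits, while 1e38 stays representable because the exponent field is the same width as FP32’s.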

Using BF16 numbers rather than FP32 numbers also means that memory bandwidth requirements, as well as system-to-system network requirements, could be halved. At the scale of a Facebook, an Amazon, or a Tencent, that is an appealing prospect. At the time of its Hot Chips presentation last year, Facebook confirmed that it already had silicon working on its datasets.

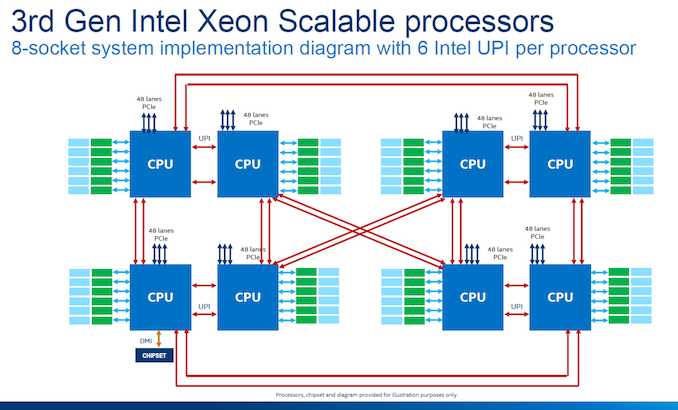

Doubling Socket-to-Socket Interconnect Bandwidth

The second upgrade that Intel has made to Cooper Lake over Cascade Lake is in the socket-to-socket interconnect. Traditionally, Intel’s Xeon processors have relied on a form of QPI/UPI (Ultra Path Interconnect) to connect multiple CPUs together so that they act as one system. In Cascade Lake Xeon Scalable, the top-end processors each had three UPI links running at 10.4 GT/s. Cooper Lake has six UPI links, also running at 10.4 GT/s. However, these links still sit behind only three controllers, so each CPU can still connect directly to only three other CPUs, but the bandwidth of each of those connections is doubled.

This means that in Cooper Lake, each CPU-to-CPU connection uses two UPI links, each running at 10.4 GT/s, for an aggregate of 20.8 GT/s. Because Intel doubled the number of links rather than evolving the standard, there are no power efficiency improvements beyond whatever Intel has gained from the manufacturing process. Doubling the bandwidth between sockets is still a good thing, even if latency and power per bit remain the same.
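The link arithmetic above can be written out as a small calculation. The 10.4 GT/s rate and the one-versus-two links per socket pair come from the text. The conversion to GB/s assumes 2 bytes of payload per transfer in each direction, which is our assumption for illustration and not a figure from Intel’s Cooper Lake briefing. The function names are hypothetical.

```python
UPI_RATE_GT_S = 10.4      # per-link transfer rate, same for Cascade Lake and Cooper Lake
BYTES_PER_TRANSFER = 2    # assumption: 16 bits of payload per transfer, per direction


def pair_transfer_rate(links_per_pair: int) -> float:
    """Aggregate transfer rate (GT/s) between two directly connected sockets."""
    return links_per_pair * UPI_RATE_GT_S


def pair_bandwidth_gbs(links_per_pair: int) -> float:
    """Aggregate one-direction bandwidth (GB/s) between two sockets, under the payload assumption."""
    return pair_transfer_rate(links_per_pair) * BYTES_PER_TRANSFER


# UPI links per socket pair in a 4S/8S layout: one on Cascade Lake, two on Cooper Lake.
for name, links in (("Cascade Lake", 1), ("Cooper Lake", 2)):
    print(f"{name}: {pair_transfer_rate(links):.1f} GT/s, "
          f"~{pair_bandwidth_gbs(links):.1f} GB/s per direction")
```

Whatever the exact payload width, the ratio is what matters here: doubling the links per pair doubles the bandwidth per pair, while the per-link rate, and so the per-bit latency and power, stays where it was.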

Intel still uses the double pinwheel topology for its eight-socket designs, ensuring a maximum of two hops between any two processors in the set. Eight sockets is the limit for a glueless network; we have already seen companies like Microsoft build servers with 32 sockets using additional glue logic.
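To see why three links per socket are enough for a two-hop maximum across eight sockets, the sketch below runs a breadth-first search over an eight-node network in which every socket has exactly three neighbours. The wiring used here, each socket linked to its two ring neighbours and to the socket directly opposite (the Wagner graph), is an illustrative stand-in we chose; it is not claimed to be Intel’s exact double pinwheel layout. All names are hypothetical.

```python
from collections import deque

NUM_SOCKETS = 8


def neighbors(s: int) -> list[int]:
    # Assumed stand-in wiring, not Intel's published map: each socket uses its
    # three UPI controllers to reach both ring neighbours and the opposite socket.
    return [(s + 1) % NUM_SOCKETS, (s - 1) % NUM_SOCKETS, (s + 4) % NUM_SOCKETS]


def hops_from(src: int) -> dict[int, int]:
    """Breadth-first search: minimum hop count from src to every reachable socket."""
    dist = {src: 0}
    queue = deque([src])
    while queue:
        cur = queue.popleft()
        for nxt in neighbors(cur):
            if nxt not in dist:
                dist[nxt] = dist[cur] + 1
                queue.append(nxt)
    return dist


max_hops = max(max(hops_from(s).values()) for s in range(NUM_SOCKETS))
socket_pairs = NUM_SOCKETS * 3 // 2   # each socket-to-socket connection counted once
print(max_hops)       # 2
print(socket_pairs)   # 12 connections; at two UPI links each, 24 links in total
```

Any degree-3, eight-node arrangement with a diameter of 2 gives the same headline property; on Cooper Lake each of those connections would simply be carried by two UPI links instead of one.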

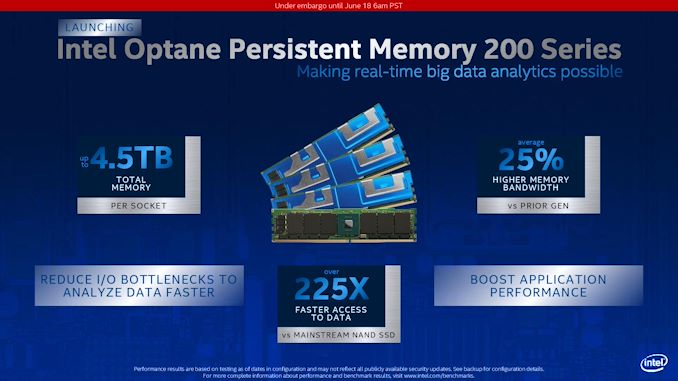

Memory and 2nd Gen Optane

The third upgrade for Cooper Lake is memory support. Intel now supports DDR4-3200 on the Cooper Lake Xeon Platinum parts, but only with one DIMM per channel (1 DPC). Two DIMMs per channel (2 DPC) is supported, but only at DDR4-2933. DDR4-3200 technically raises peak bandwidth from 23.46 GB/s per channel (at DDR4-2933) to 25.60 GB/s, an increase of 9.1%.
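The per-channel figures follow from the transfer rate multiplied by the width of a DDR4 channel. The sketch below assumes a 64-bit (8-byte) data bus per channel, with ECC bits excluded, and reports theoretical peak numbers in decimal gigabytes; sustained bandwidth in practice will be lower.

```python
BUS_WIDTH_BYTES = 8  # assumption: 64-bit DDR4 data bus per channel, ECC bits excluded


def channel_bandwidth_gbs(mt_s: int) -> float:
    """Theoretical peak bandwidth of one DDR4 channel in GB/s (10^9 bytes/s)."""
    return mt_s * BUS_WIDTH_BYTES / 1000


old = channel_bandwidth_gbs(2933)   # DDR4-2933, also the 2 DPC speed on Cooper Lake
new = channel_bandwidth_gbs(3200)   # DDR4-3200, 1 DPC only
gain = (new / old - 1) * 100
print(f"{old:.2f} -> {new:.2f} GB/s per channel (+{gain:.1f}%)")
# 23.46 -> 25.60 GB/s per channel (+9.1%)
```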

The base models of Cooper Lake will also support 1.125 TiB of memory, up from 1 TB. This allows for a 12-DIMM configuration in which six modules are 64 GB and six are 128 GB. One of the complaints about the Cascade Lake Xeons was that the 1 TB limit did not allow an equal capacity in every memory channel when all of the slots were filled, so Intel has rectified this situation. In this scenario, the six 128 GB modules could also be Optane. Why Intel didn’t go for the full 12 × 128 GB (1.5 TB) configuration, we’ll never know.
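The capacity argument can be checked by brute force. The sketch below enumerates every capacity a single channel can hold with one or two DIMMs and picks the largest one that, repeated across all channels, stays under the cap. It assumes six memory channels (consistent with 12 DIMMs at 2 DPC) and RDIMM sizes of 16, 32, 64 and 128 GB; the list of sizes and the function names are our own illustrative assumptions.

```python
from itertools import combinations_with_replacement

CHANNELS = 6                          # assumed: 12 DIMM slots at 2 DPC
DIMM_SIZES_GB = (16, 32, 64, 128)     # assumed set of available module sizes


def per_channel_options(max_dpc: int = 2) -> set[int]:
    """Every capacity one channel can hold using 1..max_dpc DIMMs."""
    options = set()
    for n in range(1, max_dpc + 1):
        for combo in combinations_with_replacement(DIMM_SIZES_GB, n):
            options.add(sum(combo))
    return options


def best_even_fill(cap_gb: int) -> tuple[int, int]:
    """Largest identical per-channel capacity whose total across all channels fits the cap."""
    per_channel = max(c for c in per_channel_options() if c * CHANNELS <= cap_gb)
    return per_channel, per_channel * CHANNELS


print(best_even_fill(1024))  # (160, 960): a 1 TB cap cannot be reached with even channels
print(best_even_fill(1152))  # (192, 1152): 64 GB + 128 GB per channel fills 1.125 TiB exactly
```

Under these assumptions, a 1 TB cap leaves a balanced system stuck at 960 GB, while the 1.125 TiB limit lines up exactly with one 64 GB and one 128 GB module in every channel.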

The higher memory capacity processors will support 4.5 TB of memory, and be listed as ‘HL’ processors.

Cooper Lake will also support Intel’s second-generation 200-series Optane DC Persistent Memory, codenamed Barlow Pass. 200-series Optane DCPMM will still be available in 128 GB, 256 GB, and 512 GB modules, the same as the first generation, and will also run at the same DDR4-2666 memory speed. Intel claims that this new generation of Optane offers 25% higher memory bandwidth than the previous generation, which we assume comes down to a new-generation Optane controller on the modules and to software optimization at the system level.

Intel states that the 25% figure comes from comparing 1st gen and 2nd gen Optane DCPMM at 15 W, both operating at DDR4-2666. Note that the first generation could operate in different power modes, from 12 W up to 18 W. We asked Intel whether the second generation offers the same range, and it stated that 15 W is the maximum power mode in the new generation.

99 Comments


schujj07 - Saturday, June 20, 2020

If you don't care what your CTO thinks, believes, or does for your infrastructure, then you are a moron of a CEO. At that point, why even have a CTO, since you know best about everything? If I were to come to you and state that I can save you $500K today on your upgrade, all while increasing performance, plus more savings on power and cooling, you would be an idiot not to listen. However, since you don't want to trust your CTO, you will just burn money.

Deicidium369 - Thursday, June 25, 2020

Wasn't aware that I hired you as my CTO. I am not the CEO - I am the owner of the business. I hired the CEO and CTO and COO for that matter. What my CTO says matters. What a forum poster on a tech forum says does not matter.

Spunjji - Friday, June 19, 2020

Dude, you've done this twice on the same article - waxed lyrical about "how things are", then when someone challenges you, backed out by saying it's just "how things were" or "how I decided to do things ten years ago". If you're going to make informed claims about the present then you need to be using info from the present; if you're not, then they're not really informed in any meaningful sense.

Korguz - Friday, June 19, 2020

schujj07, Spunjji, you know damn well he won't ever do that. You call him out on any of his BS and FUD, and all he does is resort to name calling, condescending remarks, and insults. His whole attitude is: how dare you call me out? I know what I am talking about, and it's fact (even though he rarely, if ever, posts proof), so don't argue with me!!! Or he runs away and hides.

Deicidium369 - Saturday, June 20, 2020

Hey little buddy, bored? Have nothing substantive to say? Thinking about me? Living rent-free in that low-rent, run-down tenement in your head.

Now do as your mother has asked - and go clean the basement.

Korguz - Saturday, June 20, 2020

Hey, has McDonald's hired you back yet? Or are you still laid off because of COVID-19? "Have nothing substantive to say"? Oh, like you do all the time? Your posts are pure fiction and BS, just like your life. You're living rent-free at your parents' house, so what's your point? The way you talk, and how you ALWAYS resort to insults, name calling, and condescending remarks, shows you are not what you claim to be. Clean the basement? What for, when I could just make a brat like you do it?

Deicidium369 - Thursday, June 25, 2020

I am not laid off - I own the businesses. Most of my employees in the largest business I own are idled at the moment - with only about 150 returning to work due to the construction sites in Texas and Florida being reopened. So, yes, quite a few of my employees in that business are idled - I don't work for the businesses - I own them. Might be hard for you to understand.

Parents are both dead - I am 49yo, married, 3 kids - own not only the home I am at right now typing this, but also 4 others. So not paying rent or mortgage.

I am sorry that your zero-useful-content posts are responded to condescendingly - but that is all they warrant. Maybe you can put up that screenshot of a post I made, and when I responded quite a few people told you that you needed to stop already. Not calling anyone names, little buddy - you do need to clean the basement, you are the brat that has that job.

Have a wonderful day, I hope that tomorrow brings some accomplishment that allows you to grow as an individual and finally start making posts that are not just reactionary posts to something that I have posted.

WaltC - Friday, June 19, 2020

Interesting...mentioning Intel's "custom" non-SKU versions is fine, but AMD does exactly the same thing...;) Also, it sure looks as if TSMC is 5-7 years ahead of Intel fabrication at the moment. That's an amazing leap forward, imo.

sing_electric - Friday, June 19, 2020

TSMC's definitely ahead, but I'm not sure that it's by that much. It's pretty obvious that Intel repeatedly shot itself in the foot with 10nm, but from the last update I know of (March), their 7nm was still on track for 2021, which will put it at ROUGH parity with TSMC's 5nm.

And past a point, arguing that a comparable process from Intel or TSMC is better than the other is kind of a fool's errand - you can't just say that because, say, EUV is used in X layers it's better, or that the denser one wins: one company might decide to dial down density or increase the size of certain gates in libraries because they think it'll ultimately enable the best designs. You've also got to consider frequency scaling, yields, and cost, and no one has hard numbers for those from both companies.

Deicidium369 - Saturday, June 20, 2020

Intel has worked out the issues with cobalt - not just minor features, but entire conductive layers - neither TSMC nor Samsung have even begun.

Intel 7nm will be FULL EUV - ALL 12-15 layers will be EUV - nothing on TSMC's roadmap has them doing full EUV / no DUV.

But yeah, Intel traditionally had 3 variants of each node - 1 was frequency optimized, 1 was density optimized and 1 was power optimized - wasn't uncommon to get 2 in one product.

Skylake is most definitely frequency optimized - and the most recent 14nm iteration is significantly denser than the 1st 14nm iteration.

Agree on the yields - Intel announced a couple of years ago that its 2018 10nm (10nm-?) had major yield issues - which somehow follows them to 10nm and 10nm+ - but that's mostly the fanboys. Intel's issue with 10nm was that instead of trying for a ~2x density, they tried and failed to skip a generation with a 2.7x. 10nm is 2X and 10nm+ is that 2.7x density increase - a boneheaded mistake to be sure - and in the meantime they could not produce 14nm fast enough to meet demand.