Intel Launches Cooper Lake: 3rd Generation Xeon Scalable for 4P/8P Servers

by Dr. Ian Cutress on June 18, 2020 9:00 AM EST

Socket, Silicon, and SKUs

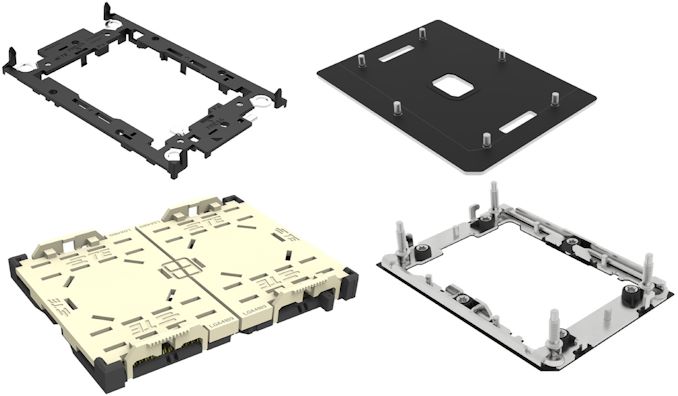

Cooper Lake Xeon Scalable ushers in a new socket, given that it is difficult to add in UPI links without adding additional pins. The new socket is known as LGA4189, for which there will be two variants: LGA4189-4 and LGA4189-5. When asked, Intel stated that Cooper Lake supports the LGA4189-5 socket, however when we asked an OEM about the difference between the sockets, we were told it comes down to the PCIe version.

LGA4189-5, for Cooper Lake, uses PCIe 3.0. LGA4189-4, which we were told is for Ice Lake, will be PCIe 4.0. Nonetheless, Intel obfuscates the difference by calling both of them ‘Socket P+’. It’s not clear if they will be interchangeable: technically a PCIe 4.0 chip can work in PCIe 3.0 mode, and a PCIe 3.0 chip can work in a PCIe 4.0 board at PCIe 3.0 speeds, but it will come down to how the UPI links are distributed, and any other differences.

We've since been told that the design of the socket is meant to make sure that Ice Lake Xeon processors should not be placed in Cooper Lake systems, however Cooper Lake processors will be enabled in systems built for Ice Lake.

We’re unsure if that means that LGA4189 / Socket P+ will be a single generation socket or not. Sapphire Rapids, meant to be the next generation Xeon Scalable, is also set for 2nd gen Optane support, which could imply a DDR4 arrangement. If Sapphire Rapids supports CXL, then that’s a PCIe 5.0 technology. There’s going to be a flurry of change within Intel’s Xeon ecosystem, it seems.

On the silicon side, Intel has decided to not disclose the die configurations for Cooper Lake. In previous generations of Xeon and Xeon Scalable, Intel would happily publish that it used three different die sizes at the silicon level to separate up the core count distribution. For Cooper Lake however, we were told that ‘we are not disclosing this information’.

I quipped that this is a new level of secrecy from Intel.

Given that Cooper Lake will be offered in variants from 16 to 28 cores, and is built on Intel’s 14nm class process (14+++?), we can at least conclude there is a ’28 core XCC’ variant. Usually the L3 cache counts are a good indicator of whether a smaller die is going to be part of the manufacturing regime, however each processor sticks to the 1.375 MB of L3 cache per core configuration.

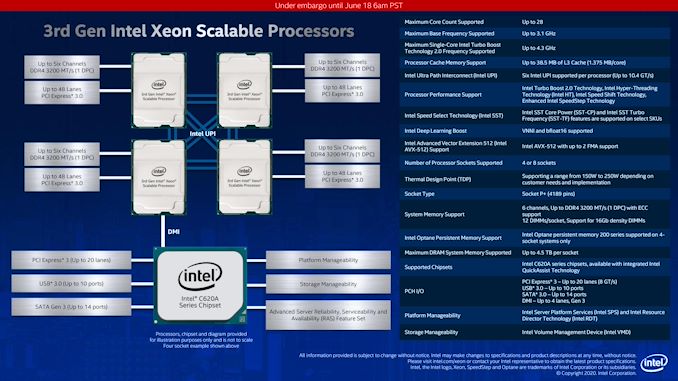

This leads us onto the actual processors being launched. Intel is only launching Platinum 8300, Gold 6300, and Gold 5300 versions of Cooper Lake, given that its distribution is limited to four socket systems or greater, and to high scale OEMs only. TDPs start at 150-165 W for the 16-24 core parts, moving up to 205-250 W for the 18-28 core parts. The power increases come from a combination of slight frequency bumps, higher memory speed support, and double the UPI links.

Intel 3rd Gen Xeon Scalable Cooper Lake 4P/8P

| SKU | Cores | Base Freq (MHz) | 1T Turbo (MHz) | DDR4 1DPC | DDR4 2DPC | DDR4 (TiB) | TDP (W) | 4P/8P | Intel SST | Price |
|---|---|---|---|---|---|---|---|---|---|---|
| Xeon Platinum 8300 | | | | | | | | | | |
| 8380HL | 28C | 2900 | 4300 | 3200 | 2933 | 4.5 | 250 | 8P | No | $13012 |
| 8380H | 28C | 2900 | 4300 | 3200 | 2933 | 1.125 | 250 | 8P | No | $10009 |
| 8376HL | 28C | 2600 | 4300 | 3200 | 2933 | 4.5 | 205 | 8P | No | $11722 |
| 8376H | 28C | 2600 | 4300 | 3200 | 2933 | 1.12 | 205 | 8P | No | $8719 |
| 8354H | 18C | 3100 | 4300 | 3200 | 2933 | 1.12 | 205 | 8P | No | $3500 |
| 8353H | 18C | 2500 | 3800 | 3200 | 2933 | 1.12 | 150 | 8P | No | $3003 |
| Xeon Gold 6300 | | | | | | | | | | |
| 6348H | 24C | 2300 | 4200 | - | 2933 | 1.12 | 165 | 4P | No | $2700 |
| 6328HL | 16C | 2800 | 4300 | - | 2933 | 4.5 | 165 | 4P | Yes | $4779 |
| 6328H | 16C | 2800 | 4300 | - | 2933 | 1.12 | 165 | 4P | Yes | $1776 |
| Xeon Gold 5300 | | | | | | | | | | |
| 5320H | 20C | 2400 | 4200 | - | 2933 | 1.12 | 150 | 4P | Yes | $1555 |
| 5318H | 18C | 2500 | 3800 | - | 2933 | 1.12 | 150 | 4P | No | $1273 |

All CPUs have Hyperthreading
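In the table above, each HL part matches its H sibling on cores, frequencies, and TDP, differing only in memory capacity and price. As a rough illustration, the Python sketch below works out that price gap from the list prices quoted in the table. The helper name `l_premium` is our own and not anything Intel publishes, and this only reflects list prices, not what customers actually pay.

```python
# List prices (USD) copied from the SKU table above.
list_price = {
    "8380HL": 13012, "8380H": 10009,
    "8376HL": 11722, "8376H": 8719,
    "6328HL": 4779, "6328H": 1776,
}

def l_premium(prices):
    """Return the price gap between each 'HL' SKU and its 'H' sibling.

    Hypothetical helper for illustration only.
    """
    premiums = {}
    for sku, price in prices.items():
        if sku.endswith("HL"):
            sibling = sku[:-1]  # e.g. "8380HL" -> "8380H"
            if sibling in prices:
                premiums[sku] = price - prices[sibling]
    return premiums

premiums = l_premium(list_price)
for sku, delta in premiums.items():
    print(f"{sku}: +${delta} over {sku[:-1]}")
```

On these list prices the gap is the same for all three HL parts, which suggests the larger memory capacity is priced as a flat adder rather than scaled with the SKU.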

Quite honestly, Intel's naming scheme is getting more difficult to follow. Every generation of Xeon Scalable becomes a tangled mess of feature separation.

No prices are attached to any of the Cooper Lake processors from our briefings, but Intel did publish them in its price document. We can compare the top SKUs from the previous generations, as well as against AMD's best.

Intel Xeon 8x80 Compare

| Spec | Xeon 8180M | Xeon 8280L | Xeon 8380HL | EPYC 7H12 |
|---|---|---|---|---|
| Platform | Skylake | Cascade | Cooper | Rome |
| Node | 14nm | 14+ nm | 14++ nm? | 7nm + 14nm |
| Price | $13011 | $13012 | $13012 | ~$8500 |
| Cores | 28 C | 28 C | 28 C | 64 C |
| Base | 2500 MHz | 2700 MHz | 2900 MHz | 2600 MHz |
| 1T Turbo | 3800 MHz | 4000 MHz | 4300 MHz | 3300 MHz |
| DDR4 | 6 x 2666 | 6 x 2933 | 6 x 3200 | 8 x 3200 |
| Max Mem | 1.5 TiB DDR4 | 4.5 TiB Optane | 4.5 TiB Optane | 4 TiB DDR4 |
| TDP | 205 W | 205 W | 250 W | 280 W |
| Sockets | 1P to 8P | 1P to 8P | 1P to 8P | 1P, 2P |
| UPI/IF | 3 x 10.4 GT/s | 3 x 10.4 GT/s | 6 x 10.4 GT/s | 64 x PCIe 4.0 |
| PCIe | 3.0 x48 | 3.0 x48 | 3.0 x48 | 4.0 x128 |
| AVX | AVX-512 F/CD/BW/DQ | AVX-512 F/CD/BW/DQ + VNNI | AVX-512 F/CD/BW/DQ + VNNI + BF16 | AVX2 |

The new processor improves on base frequency by +200 MHz and turbo frequency by +300 MHz, but it does have that extra 45 W TDP.
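Those deltas can be checked directly against the comparison table. The short Python sketch below does so for the three Intel flagships; the dictionary layout and the `delta` helper are our own illustrative choices, and the figures are simply the base, turbo, and TDP rows from the table.

```python
# Base, 1T turbo, and TDP rows from the 8x80 comparison table.
flagships = {
    "Xeon 8180M": {"base_mhz": 2500, "turbo_mhz": 3800, "tdp_w": 205},
    "Xeon 8280L": {"base_mhz": 2700, "turbo_mhz": 4000, "tdp_w": 205},
    "Xeon 8380HL": {"base_mhz": 2900, "turbo_mhz": 4300, "tdp_w": 250},
}

def delta(old, new):
    """Per-field difference between two spec dictionaries (hypothetical helper)."""
    return {key: new[key] - old[key] for key in new}

cooper_vs_cascade = delta(flagships["Xeon 8280L"], flagships["Xeon 8380HL"])
cascade_vs_skylake = delta(flagships["Xeon 8180M"], flagships["Xeon 8280L"])

print("Cooper vs Cascade:", cooper_vs_cascade)
print("Cascade vs Skylake:", cascade_vs_skylake)
```

By these numbers the previous generational step added 200 MHz to both base and turbo at the same 205 W, whereas this step comes with the higher TDP.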

Compared to AMD’s Rome processors, the most obvious advantages to Intel are in frequency, socket support, the range of vector extensions supported, and also memory capacity if we bundle in Optane. AMD’s wins are in core counts, price, interconnect, PCIe count, and memory bandwidth. However, the design of Intel’s Cooper Lake with BF16 support is ultimately for customers who weren’t looking at AMD for those workloads.
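To put a rough number on the memory bandwidth point, the Python sketch below estimates theoretical peak bandwidth per socket from the DDR4 row of the comparison table. It assumes a standard 64-bit (8-byte) DDR4 channel and ignores real-world efficiency, so treat the results as upper bounds rather than measured figures; the function name `peak_bandwidth_gbs` is ours.

```python
BYTES_PER_TRANSFER = 8  # assumption: one 64-bit DDR4 channel moves 8 bytes per transfer

def peak_bandwidth_gbs(channels, mega_transfers_per_s):
    """Theoretical peak bandwidth in GB/s (decimal) for one socket."""
    return channels * mega_transfers_per_s * BYTES_PER_TRANSFER / 1000

# (channels, DDR4 speed in MT/s) taken from the DDR4 row of the table.
configs = {
    "Xeon 8180M": (6, 2666),
    "Xeon 8280L": (6, 2933),
    "Xeon 8380HL": (6, 3200),
    "EPYC 7H12": (8, 3200),
}

bandwidth = {name: peak_bandwidth_gbs(*cfg) for name, cfg in configs.items()}
for name, gbs in bandwidth.items():
    print(f"{name}: {gbs:.1f} GB/s peak per socket")
```

Under these assumptions, two extra channels at the same DDR4-3200 speed give the EPYC part about a third more peak bandwidth per socket than the 8380HL.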

We should also point out that these SKUs are the only ones Intel is making public. As explained in previous presentations, more than 50% of Intel's Xeon sales are actually custom versions of these, with different frequency / L3 cache / TDP variations that the big customers are prepared to pay for. In Intel's briefing, some of the performance numbers given by its customers are based on that silicon, e.g. 'Alibaba Customized SKU'. We never tend to hear about these, unfortunately.

Platform

As hinted above, Intel is still supporting PCIe 3.0 with Cooper Lake, with 48 lanes per CPU. The topology will also reuse Intel’s C620 series chipsets, providing 20 more lanes of PCIe 3.0 as well as USB 3.0 and SATA.

Intel did not go into items such as VROC support or improvements for this generation, so we expect support for those to be similar to Cascade Lake.

99 Comments


Deicidium369 - Saturday, June 20, 2020 - link

No one, even someone like me only buying 60, are paying any where near MSRP. and for big customers like FB which would likely install hundreds if not thousands of these systems - the MSRP is irrelevant. ~$11K list - my Q60 order was less than $9K per.

The only bad press is from the fanboys.. some of them are editors... So yes, was delayed - yet record revenue - so yeah not a bad deal. Companies like FB don't care what the manufacturing process is - they ask "can it do what I need it to do right now?" And apparently 14nm PCIe3 Cooper Lake does.

schujj07 - Saturday, June 20, 2020 - link

If you are buying 60 hosts @ $9k/host I see a lot of waste. At that cost you aren't getting much in a Xeon. You could save huge amounts of money by reducing your number of hosts and sockets.

Deicidium369 - Thursday, June 25, 2020 - link

60 CPUs purchased

16 - Engineering workstations - dual socket - single CPU installed

2 - my engineering workstation - dual socket with dual CPU installed

16 - 4 dual node servers - 2 nodes x 2 sockets - primary datacenter (Colorado)

16 - 4 dual node servers - 2 nodes x 2 sockets - secondary datacenter (Dallas)

6 - 3 single node dual socket - flash arrays - 1 at primary, 1 at secondary, 3rd for engineering

4 - dual node server, 2 nodes x 2 sockets - systems used by IT for testing new software

2 are basically spares, today. The 8 CPUs for the SAP server were originally intended for a different purpose - for a possible replacement for my large SGI TP16000 array - which never materialized (the array is the only remaining IB system in the mix - a Mellanox SwitchX-2 SX6710G made the conversion between the 8 40Gb/s IB to 8 40Gb/s Ethernet).

When we moved to SAP, we had no baseline whatsoever - so it went on its own physical server in our datacenter in Colorado - with a mirror at Level 3 in Dallas. After a year, we decided to virtualize - and after the move to virtual, added the 4th server (nodes 7&8) to the pool - changes made in Colorado are made in Dallas as well.

When we replace the servers in the next ~12 months, the plan is to go back to 6 nodes - whether that is another 2U 2 node configuration, or as individual servers remains to be seen - will most likely be Ice Lake SP to be able to leverage PCIe4 to use dual 100Gb/s Ethernet for the planned network upgrade.

So initially the SAP system was on a dual node, 4 socket total physical server.

The CPUs were $9K per - not the hosts - hosts are servers. I can see why you say hosts - you missed the context.

"The MSRP is irrelevant. ~$11K list - my Q60 order was less than $9K per"

The $11K was in response to flgt post "Unless you're a FB or Intel employee, no one has any idea what the real price they pay for these processors." which was a response to Duncan Macdonald's post about "A 16 core 4 socket 4.5TB Xeon (the 6328HL) has a list price of $4779"

So talking about MSRP/List prices - Duncan made a claim about MSRP prices, flgt responded that very large customers pay less per unit - and I responded with my own experience with purchasing a very small number of CPUs compared to FB prices "even someone like me only buying 60, are paying any where near MSRP."

My post needed to be edited to be "even someone like me only buying 60, are *NOT* paying any where near MSRP"

so $9K per CPU - not $9K per host. You missed the context.

You need to try to be more civil - the constant effort to refute everything I say is fine - but you also need to understand the context, rather than immediately sniping. I have no problem debating the merits of whatever - but the mindless / reactionary responses from you and people like Korguz need to stop. He never offers anything to the conversation and just lies in wait - with probable screen captures to try and make his point - which is "I suck".

Sorry that I didn't choose your preference for my systems - sorry that you and others feel attacked whenever someone states the facts about AMD. I prefer Intel (along with 95% of the server market). When you are putting together a PO to buy the servers and switches, etc for your business - you can choose what you wish, and what your budget will allow.

I have 10 people in my IT department, with over 200 years of experience between us. The decisions for hardware are not made on the fly. Other than now having a 7th and 8th node that is not needed, I have been pretty happy with the decisions we have made. Business continuity and performance were our primary goals, and both were met. The opinions held by posters on a tech forum do not come into play.

schujj07 - Saturday, June 20, 2020 - link

How many of the $9k hosts are your SAP HANA hosts?

Deicidium369 - Saturday, June 20, 2020 - link

thing is 4 socket motherboards for Intel exist - they don't for Epyc.

If you are buying a 4 socket Intel system - you would buy either an Inspur or a Supermicro - which would be the motherboard, case, those "special power supplies" etc...

Those "special power supplies" are redundant and hot swap - something companies like Sun, SGI, Cisco and every single OEM have had for ages - they are considered STANDARD - not special.

brucethemoose - Thursday, June 18, 2020 - link

So I guess the use case is training on enormous datasets that don't fit into the VRAM of a GPU/AI accelerator?

xenol - Thursday, June 18, 2020 - link

It looks like that, plus using Optane offers very fast persistent storage which depending on bandwidth needs can replace DRAM. Either way, having a large amount of very fast storage vs. a split between DRAM and secondary storage seems to have a benefit if you believe Intel's marketing materials.

Deicidium369 - Thursday, June 18, 2020 - link

Ever been involved with a very large SAP install? Once the system is in production and needs to be restarted - the amount of time it takes to bring down and back up can take hours and hours - all the while it is not able to be used. Systems like SAP run entirely out of memory - and so during a reboot, a ton of data needs to be loaded from storage to memory - with NVDIMMs a lot of that data can be available with only a cursory check, rather than having to be loaded from relatively slow storage - allowing the system to come up much quicker - even saving a couple hours on a reboot means the business can be back up and running, saving hours on lost productivity. In most large companies - nothing happens without SAP.

Intel's marketing materials are based on having 95% market share in the Datacenter and a long relationship with businesses and their needs. So not like they are trying to cram on more cores to convince businesses that is what they need - and making few sales.

Duncan Macdonald - Thursday, June 18, 2020 - link

A PCIe 4.0 NVMe drive can easily transfer over 250GB/Min so each terabyte of persistent (Optane or equivalent) memory gives a startup advantage of 4 minutes - hardly a massive advantage

Deicidium369 - Thursday, June 18, 2020 - link

Funny admins of extremely large SAP and other ERP installs say otherwise.