Western Digital Unveils IntelliFlash N5100: An Entry-Level All-Flash Storage System

by Anton Shilov on July 19, 2019 12:00 PM EST

Posted in: SSDs, Storage, Western Digital, HGST, 3D NAND, IntelliFlash

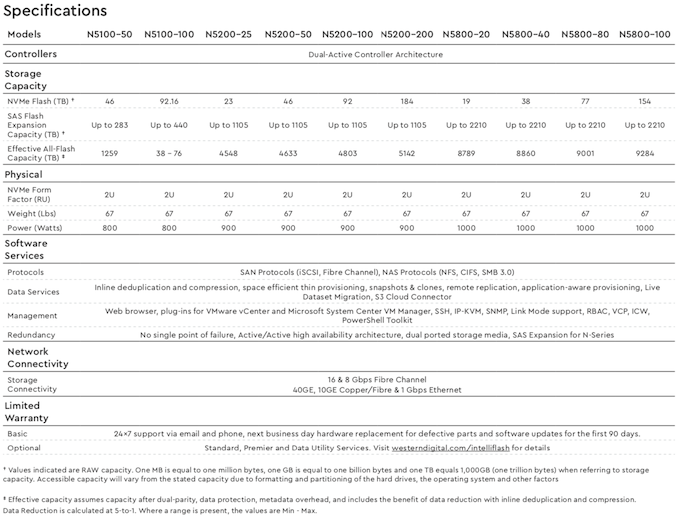

Western Digital has introduced its most affordable NVMe all-flash storage array, the new IntelliFlash N5100. The device offers up to 92 TB of raw NAND flash using SN200 NVMe SSDs. When needed, it can be expanded with additional 2U IntelliFlash SAS modules, each featuring 24 SAS drive bays, for hundreds of terabytes of raw NAND flash. The IntelliFlash N5100 is aimed at customers who need to accelerate business applications but do not need extreme levels of performance at high prices.

Western Digital’s IntelliFlash N-series NVMe all-flash arrays sit above the company’s IntelliFlash HD-series all-flash and T-series hybrid-flash arrays, offering the lowest latency (around 200 µsec) and the highest data transfer rates in the lineup. The N-series family contains three models: the highest-end N5800, the mainstream N5200, and now the entry-level N5100. The top-of-the-range N5800 offers up to 1.7M sustained IOPS and up to 23 GB/s of data throughput, the midrange N5200 provides up to 800K IOPS, and the entry-level N5100 delivers up to 400K sustained IOPS.

| Western Digital's IntelliFlash N-series | N5100 | N5200 | N5800 |
|---|---|---|---|
| Random Read/Write Performance | 400K IOPS | 800K IOPS | 1.7M IOPS |
| Sustained Data Transfer Rate | ? | ? | 23 GB/s |
| Latency | 200 µsec | 200 µsec | 200 µsec |
| Maximum Raw Capacity | 400 TB | 1.4 PB | 2.5 PB |
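
For a sense of scale, the N5800's throughput figure can be converted into network line-rate terms. The short Python sketch below is illustrative arithmetic only: the function names are made up for this example, it uses decimal units and ignores protocol overhead, and the 100 Gb/s link speed is merely a reference point for comparison, not a statement about which interfaces the arrays ship with.

```python
def gbytes_to_gbits(gb_per_s: float) -> float:
    """Convert gigabytes per second to gigabits per second (8 bits per byte)."""
    return gb_per_s * 8


def links_needed(gb_per_s: float, link_gbit_per_s: float) -> float:
    """Links of a given line rate needed to carry the throughput.

    Ignores protocol and encoding overhead (a simplifying assumption).
    """
    return gbytes_to_gbits(gb_per_s) / link_gbit_per_s


n5800_throughput = 23.0  # GB/s, the N5800 figure quoted in the table
print(gbytes_to_gbits(n5800_throughput))      # 184.0
print(links_needed(n5800_throughput, 100.0))  # 1.84
```

In other words, if the 23 GB/s figure really is in gigabytes, it is an aggregate that would have to be spread across multiple host ports.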

All of Western Digital's IntelliFlash N-series all-flash arrays are based on Intel’s Xeon processors and use WD's dual-active controller architecture in a 2U form-factor. The N5100-series AFAs can be expanded using 2U SAS-based HD-series AFAs that carry SSDs of up to 15.36 TB each (Ultrastar DC SS530) and provide up to 368 TB of raw NAND flash (in the case of the HD2160 version) with 1 ms latency. Meanwhile, the IntelliFlash N5800 can support multiple IntelliFlash HD or 2U or 3U machines for a total raw NAND capacity of up to 2.5 PB.
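
The 368 TB figure is consistent with simple arithmetic: a 24-bay shelf, as mentioned earlier, fully populated with 15.36 TB drives. A minimal Python sketch of that calculation follows; the helper name is hypothetical, and it assumes every bay holds the largest supported SSD.

```python
def raw_capacity_tb(bays: int, drive_tb: float) -> float:
    """Raw capacity of a fully populated shelf, before RAID or data reduction."""
    return bays * drive_tb


# Assumption: 24 SAS bays, each with a 15.36 TB Ultrastar DC SS530.
shelf_tb = raw_capacity_tb(24, 15.36)
print(round(shelf_tb, 2))  # 368.64
```

This covers raw NAND only; effective capacity after deduplication and compression depends on the data and is not modelled here.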

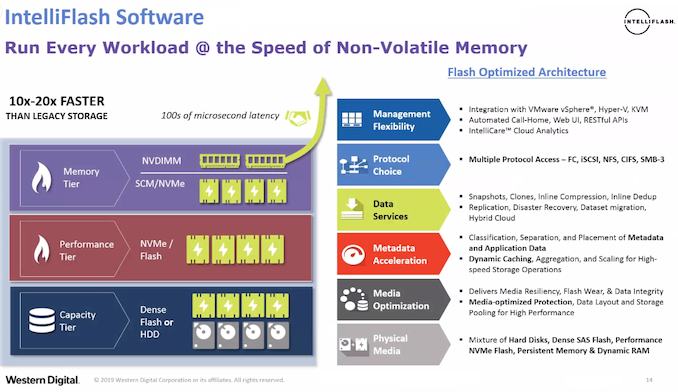

From a software standpoint, all the latest IntelliFlash machines run Western Digital’s IntelliFlash OE 3.10 operating system, which supports block (FC, iSCSI) as well as file (NFS, CIFS, SMBv3) protocols and is therefore compatible with a variety of software from multiple vendors. Furthermore, the OS fully supports virtualization, data protection, data reduction, inline deduplication, and compression to improve performance and reliability and to increase effective capacity.

Interestingly, IntelliFlash OE 3.10 adds support for Storage Class Memory (SCM) as well as NVDIMMs to further boost the arrays' performance, but Western Digital does not disclose what kinds of SCM and NVDIMMs are currently supported or which future configurations will be supported.

Western Digital’s IntelliFlash N5100 AFAs will be available in the near future. Actual pricing will depend on exact configurations.

Source: Western Digital

17 Comments

Dug - Tuesday, July 30, 2019

No, 1.7M IOPS is not entry level in enterprise market.

Hamm Burger - Friday, July 19, 2019

Sustained transfer rate of 23GB/s? To what? This is double the capacity of the 100Gb/s fabrics that are just now entering common use. What are they using?

Wardrop - Friday, July 19, 2019

Maybe that's combined over multiple interfaces?

gfkBill - Saturday, July 20, 2019

32GB/s fibre or 40GB/s iSCSI are referred to alongside 23GB/s in their data sheet, suspect WD are mixing GB and Gb, which is bizarre for a storage company.

Hopefully the storage capacity isn't also a tenth of what they claiming!

Dug - Tuesday, July 30, 2019

To itself. You aren't running one application off of this.

YB1064 - Tuesday, July 23, 2019

Are you guys going to review one of these?

phoenix_rizzen - Thursday, July 25, 2019

Wonder why they went with Xeon CPUs for this. Will there be enough PCIe lanes available to actually use all that glorious NVMe throughput?

Although, I guess this just pushes the bottleneck to the network/fabric that connects this thing to the actual compute/processing nodes, so using PCIe switches to share PCIe lanes across NVMe devices won't be an issue.

Would be interesting to see how this would compare to an EPYC-based system, especially one that combines compute and storage into a single server, where all the extra PCIe lanes would really come in handy (no network/fabric to bottleneck you).