The NVIDIA GeForce GTX 1660 Ti Review, Feat. EVGA XC GAMING: Turing Sheds RTX for the Mainstream Market

by Ryan Smith & Nate Oh on February 22, 2019 9:00 AM EST

Wolfenstein II: The New Colossus (Vulkan)

id Software is popularly known for a few games involving shooting stuff until it dies, just with different 'stuff' for each one: Nazis, demons, or other players while scorning the laws of physics. Wolfenstein II is the latest of the first, the sequel of a modern reboot series developed by MachineGames and built on id Tech 6. While the tone is significantly less pulpy nowadays, the game is still a frenetic FPS at heart, succeeding DOOM as a modern Vulkan flagship title and arriving as a pure Vulkan implementation, unlike the originally OpenGL DOOM.

Featuring a Nazi-occupied America of 1961, Wolfenstein II is lushly designed yet not oppressively intensive on the hardware, something that goes well with a pace of action that emerges suddenly from a level design flush with alternate historical details.

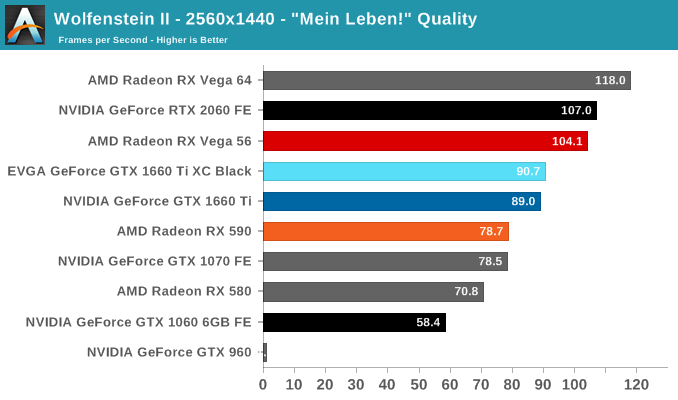

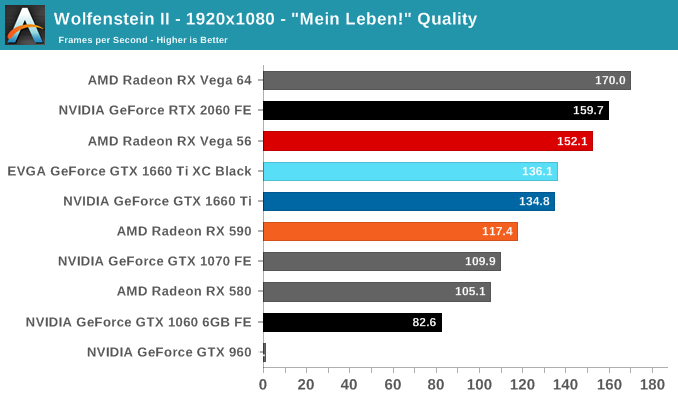

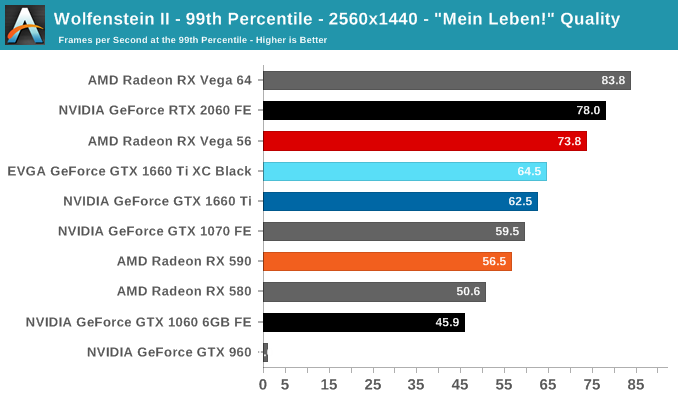

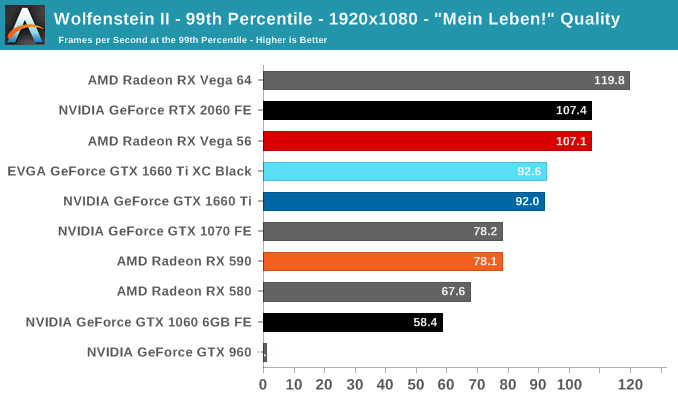

The highest quality preset, "Mein leben!", was used. Wolfenstein II also features Vega-centric GPU Culling and Rapid Packed Math, as well as Radeon-centric Deferred Rendering; in accordance with the preset, neither GPU Culling nor Deferred Rendering was enabled.

As we've seen before, Turing and Vega tend to run well on Wolfenstein II. Across our games, these results are actually the closest the RX 590 gets to the GTX 1660 Ti, and even here the GTX 1660 Ti is a solid 13-14% ahead. Here, the GTX 1660 Ti also pulls its biggest lead over the GTX 1060 6GB, coming in at more than 1.5X faster, but it also loses to the RX Vega 56 by more than in other games.

The 6GB of framebuffer doesn't seem to be holding the GTX 1660 Ti back. The GTX 960's 2GB framebuffer, on the other hand, is asphyxiating.
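For readers who want to reproduce the kind of relative figures quoted above ("13-14% ahead", "more than 1.5X faster") from their own average frame rates, the arithmetic can be sketched as follows. This is a minimal illustration, not the review's methodology: the function names are hypothetical, and the frame rates are placeholders rather than values taken from the charts.

```python
def percent_lead(fps_a: float, fps_b: float) -> float:
    """Return how far card A leads card B, as a percentage of B's frame rate."""
    if fps_b <= 0:
        raise ValueError("fps_b must be positive")
    return (fps_a / fps_b - 1.0) * 100.0


def speedup(fps_a: float, fps_b: float) -> float:
    """Return A's frame rate as a multiple of B's (1.5 means '1.5X')."""
    if fps_b <= 0:
        raise ValueError("fps_b must be positive")
    return fps_a / fps_b


# Placeholder average frame rates, NOT measurements from this review.
example_fps = {"card_a": 90.0, "card_b": 60.0}

lead = percent_lead(example_fps["card_a"], example_fps["card_b"])
ratio = speedup(example_fps["card_a"], example_fps["card_b"])
print(f"card_a leads card_b by {lead:.1f}% ({ratio:.2f}X)")
```

Note that the two forms describe the same ratio: a card that is 1.5X as fast holds a 50% lead, so a "13-14% ahead" result corresponds to roughly 1.13-1.14X.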

157 Comments

Midwayman - Friday, February 22, 2019

I feel like they don't realize that until they improve the performance per $$$ there is very little reason to upgrade. I'm happy sitting on an older card until that changes. Though if I were on a lower end card I might be kicking myself for not just buying a better card years ago.

eva02langley - Friday, February 22, 2019

Since the bracket price moved up so much for relative performance at higher price point from the last generation, there is absolutely no reason for upgrading. That is different if you need a GPU.

zmatt - Friday, February 22, 2019

Agreed. It's kind of wild that I have to pay $350 to get on average 10fps better than my 980ti. If I want a real solid performance improvement I have to essentially pay the same today as when the 980ti was brand new. The 2070 is anywhere between $500-$600 right now depending on model and features. IIRC the 980ti was around $650. And according to AnandTech's own benchmarks it gives on average 20fps better performance. That's 2 generations, 5 years and I get 20fps for $50 less? No. I should have a 100% performance advantage for the same price by this point. Nvidia is milking us. I'm eyeballing it a bit here but the 2080Ti is a little bit over double the performance of a 980Ti. It should cost less than $700 to be a good deal.

Samus - Friday, February 22, 2019

I agree in that this card is a tough sell over a RTX2060. Most consumers are going to spend the extra $60-$70 for what is a faster, more well-rounded and future-proof card. If this were $100 cheaper it'd make some sense, but it isn't.

PeachNCream - Friday, February 22, 2019

I'm not so sure about the value prospects of the 2070. The banner feature, real-time ray tracing, is quite slow even on the most powerful Turing cards and doesn't offer much of a graphical improvement for the variety of costs involved (power and price mainly). That positions the 1660 as a potentially good selling graphics card AND endangers the adoption of said ray tracing such that it becomes a less appealing feature for game developers to implement. Why spend the cash on supporting a feature that reduces performance and isn't supported on the widest possible variety of potential game buyers' computers and why support it now when NVIDIA seems to have flinched and released the 1660 in a show of a lack of commitment? Already game studios have ditched SLI now that DX12 pushed support off GPU companies and into price-sensitive game publisher studios. We aren't even seeing the hyped up feature of SLI between a dGPU and iGPU that would have been an easy win on the average gaming laptop due in large part to cost sensitivity and risk aversion at the game studios (along with a healthy dose of "console first, PC second" prioritization FFS).

GreenReaper - Friday, February 22, 2019

What I think you're missing is that the DirectX rendering API set by Microsoft will be implemented by all parties sooner or later. It really *does* meet a need which has been approximated in any number of ways previously. Next generation consoles are likely to have it as a feature, and if so all the AAA games for which it is relevant are likely to use it.

Having said that, the benefit for this generation is . . . dubious. The first generation always sells at a premium, and having an exclusive even moreso; so unless you need the expanded RAM or other features that the higher-spec cards also provide, it's hard to justify paying it.

alfatekpt - Monday, February 25, 2019

I'm not sure about that. It is also an increase in thermals and power consumption that also costs money over time. RTX advantage is basically null at that point unless you want to play at low FPS so 2060 advantage is 'merely' raw performance.

For most people and current games 1160 already offers ultra great performance so not sure if people gonna shell out even more money for the 2060 since 1160 is already a tad expensive.

1160 seems to be an awesome combination of performance and efficiency. Would it be better $50 lower? of course but why? since they don't have real competition from AMD...

Strunf - Friday, February 22, 2019

Why would nvidia give up of a market that costs them almost nothing? if 5 years from now they do cloud gaming then they pretty much are still doing GPU.

Anyways even in 5 years cloud gaming will still be a minor part of the GPU market.

MadManMark - Friday, February 22, 2019

"They are pushing prices up and up but that's not a long term strategy."

That comment completely ignores the massive increase in value over both the RX 590 and Vega 56. Nvidia produces a card that both makes the RX590 at the same pricepoint completely unjustifiable, and prompts AMD to cut the price of the Vega 56 in HALF overnight, and you are saying that it is *Nvidia* not *AMD* that is charging high prices?!?! I've always thought the AMD GPU fanatics who think AMD delivers more value were somewhat delusional, but this comment really takes the cake.

eddman - Saturday, February 23, 2019

It's not about AMD. The launch prices have clearly been increased compared to previous gen nvidia cards.

Even this card is $30 more than the general $200-250 range.