Huawei & Honor's Recent Benchmarking Behaviour: A Cheating Headache

by Andrei Frumusanu & Ian Cutress on September 4, 2018 8:59 AM EST

Posted in: Smartphones, Huawei, SoCs, Benchmarks, honor, Kirin 970

Section By Ian Cutress & Andrei Frumusanu

Does anyone remember our articles regarding unscrupulous benchmark behavior back in 2013? At the time we called the industry out on the fact that most vendors were increasing thermal and power limits to boost their scores in common benchmark software. Fast forward to 2018, and it is happening again.

Benchmarking Bananas: A Recap

cheat: verb, to act dishonestly or unfairly in order to gain an advantage.

AnandTech has a long and rich history of exposing benchmark cheating on smartphones. It is quite apt that this story goes full circle, as the one to tip off Brian on Samsung’s cheating behaviour on the Exynos Galaxy S4 a few years back was Andrei, who now writes for us.

When we exposed one vendor, it led to a cascade of discussions and a few more articles investigating more vendors involved in the practice, and then even to Futuremark delisting several devices from their benchmark database. Scandal was high on the agenda, and the results were bad for both companies and end users: devices found cheating were tarnishing the brand, and consumers could not take any benchmark data as valid from that company. Even reviewers were misled. It was a deep rabbit hole that should not have been approached – how could a reviewer or customer trust what number was coming out of the phone if it was not in a standard ‘mode’?

So thankfully, ever since then, vendors have backed off quite a bit on the practice. In the years since 2013, it would appear that a significant proportion of devices on the market have behaved within expected parameters. There are some minor exceptions, mostly from Chinese vendors, although this comes in several flavors. Meizu has a reasonable attitude to this: when a benchmark is launched, the device puts up a prompt to confirm entering a benchmark power mode, so at least they’re open and transparent about it. Some other phones have ‘Game Modes’ as well, which focus either on raw performance or on extended battery life.

Going Full Circle, At Scale

So today we are publishing two front page pieces. This one is a sister article to our piece addressing Huawei’s new GPU Turbo; while that feature comes with overzealous marketing claims, the technology is sound. Through the testing for that article, we actually stumbled upon this issue, completely orthogonal to GPU Turbo, which needs to be published. We also wanted to address something that Andrei has come across while spending more time with this year’s devices, including the newly released Honor Play.

The Short Detail

As part of our phone comparison analysis, we often employ additional power and performance testing on our benchmarks. While testing out the new phones, the Honor Play had some odd results. Compared to the Huawei P20 devices tested earlier in the year, which have the same SoC, the results were also quite a bit worse and equally weird.

Within our P20 review, we had noted that the P20’s performance had regressed compared to the Mate 10. Since we had encountered similar issues on the Mate 10 which were resolved with a firmware update pushed to me, we didn’t dwell too much on the topic and concentrated on other parts of the review.

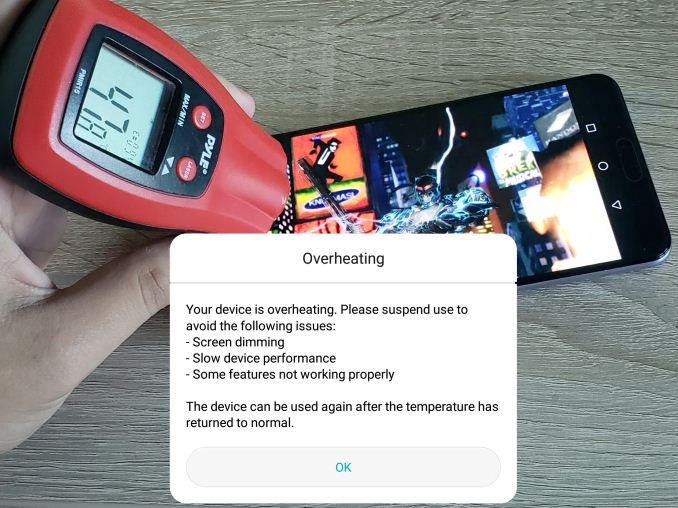

Looking back at it now after some re-testing, it seems quite blatant as to what Huawei and seemingly Honor had been doing: the newer devices come with a benchmark detection mechanism that enables a much higher power limit for the SoC with far more generous thermal headroom. Ultimately, on certain whitelisted applications, the device performs super high compared to what a user might expect from other similar non-whitelisted titles. This consumes power, pushes the efficiency of the unit down, and reduces battery life.
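To make the idea concrete, here is a minimal sketch of what a whitelist-based detection scheme of this kind could look like. It is purely illustrative: the article does not describe how the detection is implemented, so the package-name lookup, the `BENCHMARK_WHITELIST` contents, and the `PowerProfile` names are all assumptions made up for this example.

```python
from enum import Enum


class PowerProfile(Enum):
    STANDARD = "standard"  # normal power and thermal limits
    BOOSTED = "boosted"    # raised power limit, more generous thermal headroom


# Hypothetical whitelist: the real list of detected applications is not published.
BENCHMARK_WHITELIST = {"com.example.benchmark.public"}


def select_power_profile(package_name: str) -> PowerProfile:
    """Pick the profile a whitelist-based scheme would apply to an app.

    Assumption: detection keys off the package name. The article only says
    that certain whitelisted applications get the higher limits.
    """
    if package_name in BENCHMARK_WHITELIST:
        return PowerProfile.BOOSTED
    return PowerProfile.STANDARD


# The same workload under an unknown name falls back to the standard limits.
print(select_power_profile("com.example.benchmark.public"))
print(select_power_profile("com.example.benchmark.renamed"))
```

Under that assumption, any application outside the list, including a renamed copy of the same benchmark, would run within the standard limits.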

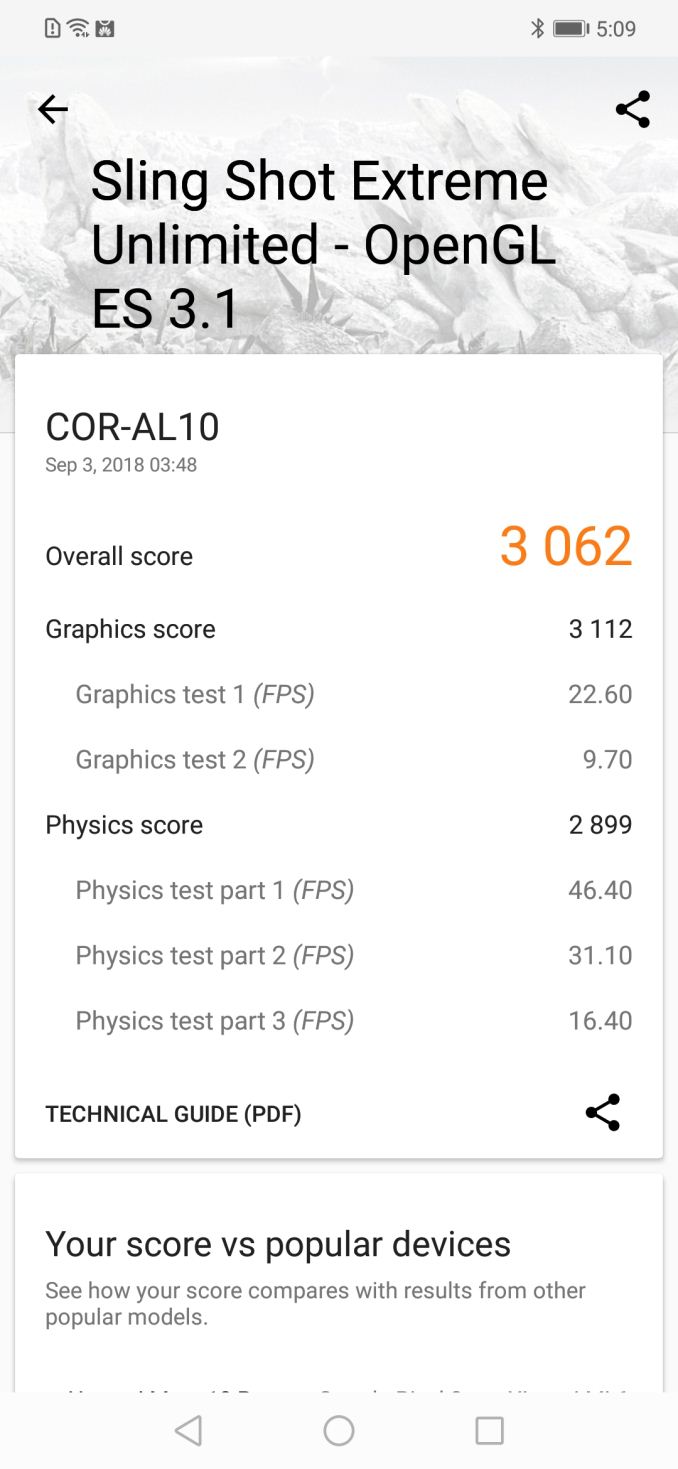

This has knock-on effects, not least on how far users can trust the way the device works. The end result is that a single performance number is higher, which is good for marketing but unrealistic for any user of the device. The efficiency of the SoC also decreases (depending on the chip), as the chip is pushed well outside its standard operating window. It makes the SoC, one of the differentiating points of the device, look worse, all for the sake of a high benchmark score. Here's the example of benchmark detection mode on and off on the Honor Play:

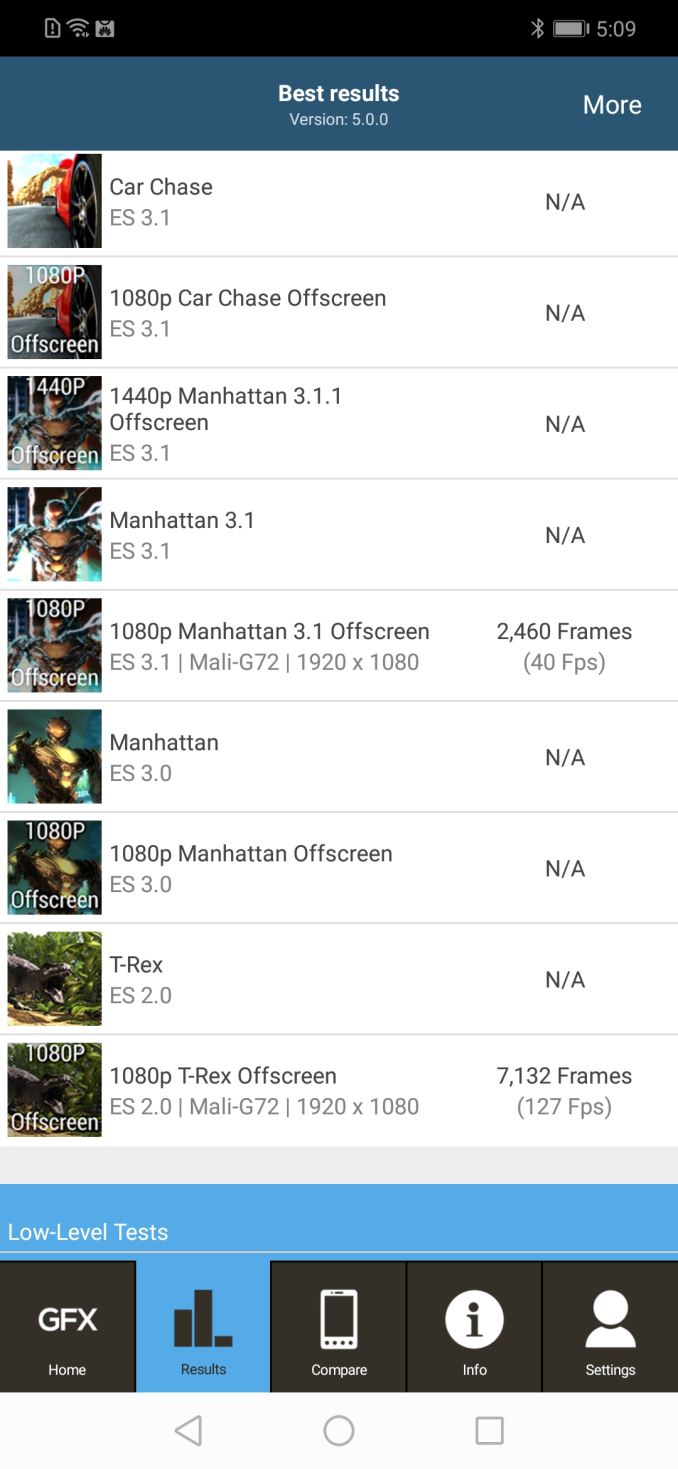

GFXBench T-Rex Offscreen Power Efficiency (Total Device Power)

| AnandTech | Mfc. Process | FPS | Avg. Power (W) | Perf/W Efficiency |
|---|---|---|---|---|
| Honor Play (Kirin 970) BM Detection Off | 10FF | 66.54 | 4.39 | 15.17 fps/W |
| Honor Play (Kirin 970) BM Detection On | 10FF | 127.36 | 8.57 | 14.86 fps/W |
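The efficiency column is simply frame rate divided by average power. As a rough check, the sketch below recomputes it from the rounded FPS and power figures in the table; the function and variable names are our own, and because the inputs are already rounded, the result can differ from the published column in the last digit.

```python
def perf_per_watt(fps: float, avg_power_w: float) -> float:
    """Efficiency in frames per second per watt of total device power."""
    return fps / avg_power_w


# (FPS, average power in W) for the Honor Play, taken from the table above.
results = {
    "detection_off": (66.54, 4.39),
    "detection_on": (127.36, 8.57),
}

efficiency = {mode: perf_per_watt(fps, watts) for mode, (fps, watts) in results.items()}

# Relative change in efficiency when benchmark detection kicks in.
efficiency_change_pct = (
    (efficiency["detection_on"] - efficiency["detection_off"])
    / efficiency["detection_off"]
    * 100.0
)

for mode, value in efficiency.items():
    print(f"{mode}: {value:.2f} fps/W")
print(f"efficiency change with detection on: {efficiency_change_pct:.1f}%")
```

The point of the calculation is that nearly doubling the frame rate costs slightly more than double the power, so efficiency goes down rather than up.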

We’ll go more into the benchmark data on the next page.

We did approach Huawei about this during the IFA show last week, and obtained a few comments worth putting here. Another element to the story is that Huawei’s new benchmark behavior very much exceeds anything we’ve seen in the past. We use custom editions of our benchmarks (from their respective developers) so we can test with this ‘detection’ on and off, and the massive differences in performance between the publicly available benchmarks and the internal versions that we’re using for testing are absolutely astonishing.
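One way to express this comparison is as the percentage by which the public build's score exceeds the score from the custom build. The sketch below is only an illustration of that arithmetic: it assumes the ‘BM Detection On’ figure corresponds to the public build and the ‘BM Detection Off’ figure to the custom build, and the 10% flagging threshold is an arbitrary value chosen for the example, not one used in our testing.

```python
def score_inflation_pct(public_score: float, custom_score: float) -> float:
    """Percentage by which the public build outscores the custom build."""
    return (public_score - custom_score) / custom_score * 100.0


def looks_whitelisted(
    public_score: float, custom_score: float, threshold_pct: float = 10.0
) -> bool:
    """Flag a result when the gap exceeds a (hypothetical) noise threshold."""
    return score_inflation_pct(public_score, custom_score) > threshold_pct


# Honor Play T-Rex Offscreen frame rates quoted earlier in the article.
public_fps = 127.36  # assumed: public build, detection on
custom_fps = 66.54   # assumed: custom build, detection off

print(f"inflation: {score_inflation_pct(public_fps, custom_fps):.1f}%")
print(f"flagged: {looks_whitelisted(public_fps, custom_fps)}")
```

A small gap between the two builds would be expected from run-to-run variance; a gap approaching double is not.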

Huawei’s Response

As usual with investigations like this, we offered Huawei an opportunity to respond. We met with Dr. Wang Chenglu, President of Software at Huawei’s Consumer Business Group, at IFA to discuss this issue, which is purely a software play from Huawei. We covered a number of topics in a non-interview format, which are summarized here.

Dr. Wang asked if these benchmarks are the best way to test smartphones as a whole, as he personally feels that these benchmarks are moving away from real world use. A single benchmark number, stated Huawei’s team, does not show the full experience. We also discussed the validity of the current set of benchmarks, and the need for standardized benchmarks. Dr. Wang expressed his preference for a standardized benchmark that is more like the user experience, and they want to be a part of any movement towards such a benchmark.

I explained that we work with these benchmark companies, such as Kishonti (GFXBench) and Futuremark (3DMark), as well as others, to help steer their tests towards workloads that are more representative. We explained that employing a benchmarking mode to game test results is not a solution to what they see as a misrepresentation of user experience in these benchmarks. This is especially true when the chip ends up with lower efficiency. For a test to be honest, the only way for it to relate better to user experience is to run it in the standard power envelope that every regular game runs in.

Huawei stated that they have been working with industry partners for over a year to find the best tests closest to the user experience. They like the fact that for items like call quality, there are standardized real-world tests that measure these features that are recognized throughout the industry, and every company works towards a better objective result. But in the same breath, Dr. Wang also expresses that in relation to gaming benchmarking that ‘others do the same testing, get high scores, and Huawei cannot stay silent’.

He states that it is much better than it used to be, and that Huawei ‘wants to come together with others in China to find the best verification benchmark for user experience’. He also states that ‘in the Android ecosystem, other manufacturers also mislead with their numbers’, citing one specific popular smartphone manufacturer in China as the biggest culprit, and that it is becoming ‘common practice in China’. Huawei wants to open up to consumers, but has trouble when competitors continually post unrealistic scores.

Ultimately Huawei states that they are trying to face off against their major Chinese competition, which they say is difficult when other vendors put their best ‘unrealistic’ score first. They feel that the way forward is standardization on benchmarks, that way it can be a level field, and they want the media to help with that. But in the interim, we can see that Huawei has also been putting its unrealistic scores first too.

Our response to this is that Huawei needs to be a leader, not a follower on this issue. I explained that the benchmarks we use (GFXBench) are well understood and are ‘standard’, and as real world as possible, but there are benchmarks we don’t use (AnTuTu) because they don’t mean anything. We also use benchmarks such as SPEC, which are very standard in this space, to evaluate an SoC and device.

The discussion then pivoted towards the decline in trust in Huawei’s benchmark numbers in presentations as a result of this. We already take the data with a large grain of salt, but now we have no reason to listen to them as we do not know which values are in this ‘benchmark’ mode.

Huawei’s reaction to this is that they will ensure that future benchmark data in presentations is independently verified by third parties at the time of the announcement. This was the best bit of news.

Our Reaction

While not explicitly stated in a clear line, Huawei is admitting to doing what they are doing, citing specific vendors in China as the primary reason for it.

We understand the impact that higher marketing numbers have; however, this is the worst way to get them. Rather than calling out the competition for bad practices, Huawei is trying to beat them at their own game, and it’s a game in which everyone loses. For a company the size of Huawei, brand image is a big part of what the company is, and trying to mislead customers just for a high score will backfire. It has backfired.

Huawei’s comments about standardized benchmarking are not new – we’ve heard it since time immemorial in the PC space, and several years ago, Arm was similarly discussing it with the media. Since then the situation has gotten better: the canned benchmark companies speak with game developers to develop real-world scenarios, but they also want to push the boundaries.

The only thing that hasn’t happened in the mobile space compared to the PC space on this is proper in-game benchmark modes that output data properly. This is something that is going to have to be vendor driven, as our interactions with big gaming studios on in-game benchmarks typically fall flat. Any frame rate testing on mobile requires additional software, which can require root; however, Huawei recently disabled the ability to root their phones. That said, we're told that at some point in the future Huawei will be re-enabling rooting for registered developers.

Overall, while it’s positive that Huawei is essentially admitting to these tactics, we believe the reasons for doing so are flimsy at best. The best way to implement this sort of ‘mode’ is to make it optional, rather than automatic, as some vendors in China already do. But Huawei needs to lead from the front if it ever wants to approach Samsung in unit sales.

Huawei did not go into how the benchmarking detection will be addressed in current and future devices. We will come back to the issue for the Mate 20 launch on October 16th.

84 Comments

beginner99 - Wednesday, September 5, 2018

The most interesting aspect is that it shows that ARM also struggles with power once they get into x86 performance area. No free lunch. And I wonder how the other devices cheat. Probably most do somehow. Huawei just wasn't that clever.

ncsaephanh - Wednesday, September 5, 2018

Great work on this piece. I really appreciate good journalism giving light to industry issues while having the technical expertise to dive deep and explain everything in a concise manner. And I wouldn't worry about catching this earlier, what's important is we know now. And hopefully at least some consumers now won't fall for the marketing/benchmarking hype.

yhselp - Wednesday, September 5, 2018

The GFXBench T-Rex Offscreen Power Efficiency benchmark in the Kirin 970 piece still shows the cheating result for the Mate 10.

It's astonishing to see the difference in sustained performance that cooling alone can account for: P20 Pro and Honor Play have the same maker, same SoC, similar dimensions, and yet the performance is quite different.

Hyper72 - Wednesday, September 5, 2018

I thought that ever since Samsung was caught doing the same thing in 2013 you put in active countermeasures (randomly named benchmark software, etc.) or at least a test for cheating as a standard part of your setup?

tommo1982 - Wednesday, September 5, 2018

These tests show similar behavior with iPhone. It's not any faster than the other leading brands. The difference between peak and sustained is huge. Same goes for Samsung and Xiaomi.

I understand why the UI seems so fast and responsive, and why many people complained about the performance. It just can't stay at peak forever.

eastcoast_pete - Thursday, September 6, 2018

To clarify up front: I don't own or like iOS devices. However, I have to give Apple its due here: the idea of really high, short burst performance coupled with okay longer-term speed is pretty much what I (and probably many other mobile users) want in smartphones. This is useful for multitasking while opening multiple browser windows etc., i.e. scenarios that really benefit from well above-normal CPU/GPU speeds for the few seconds, resulting in a fluid user experience. This is different from running the SoC to heat exhaustion and shutdown whenever a benchmarking app is recognized. Some current Android flagships are sort-of able to do that short burst ("turbo" in PCs) also, but none has yet the (momentary) peak performance of Apple's wide and deep cores. The Mongoose M3 was an attempt, the Kirin 980 was an apparent step towards this, sort of, but is now marred by this benchmark cheating BS. Let's see what QC can cook up, they tend to get closest to Apple's top SoC.

techconc - Monday, September 10, 2018

Thermal throttling happens on ALL phones. That's not what's in question. The issue is with companies that artificially white list specific benchmarks in order to achieve results that would not be seen in real applications.

To that end, Anandtech's battery tests have always demonstrated the difference between peak and sustained performance in mobile devices. Up through the iPhone 6s, there was very little throttling going on with iPhones on peak loads. To your point, the level of throttling in iPhones has been approaching practices of common Android equivalents.

psychobriggsy - Thursday, September 6, 2018

Naughty. Makes running a benchmark in a 'loop mode' until the battery runs out very important IMO. If the device dies in an hour in benchmarks, but 3 hours elsewhere, then you know something's awry.

However there is a potential positive - it shows that the Kirin 970 can perform well at higher power consumption - there's no performance wall between 3.5W and 9W, and the perf/W scales fairly well too.

So - why not look into a 'docked' mode option in the future? One option could be a Switch-like dock, using external power (to protect the battery), optional cooling assistance, HDMI out to a TV, provide a controller in this pack as well, and allow the SoC to run as fast as this setup can keep the device from damaging itself. That's flippin' marketable. The dock would cost a few dollars, and it sounds like the software is already there in the main.

Hopefully the Mali G76 in the Kirin 980 actually fixes a lot of the performance issues with Mali, which surely were a factor in this sad situation (also clearly saving money by using a smaller GPU; wide and slow beats narrow and fast for GPUs where power consumption matters).

hanselltc - Friday, September 7, 2018

wut if: the white list includes popular games as well? is that still cheating?

s.yu - Monday, September 10, 2018

Obviously you haven't read the article, the so-called whitelisting's performance can't be sustained, it's not as simple as merely activating some sort of game mode automatically.