Huawei’s GPU Turbo: Valid Technology with Overzealous Marketing

by Ian Cutress & Andrei Frumusanu on September 4, 2018 9:00 AM EST

Posted in: Smartphones, Huawei, Mobile, Benchmarks, honor, Neural Networks, Kirin 970, AI

The Detailed Explanation of GPU Turbo

Under the hood, Huawei uses TensorFlow neural network models that are pre-trained by the company on a title-by-title basis. By examining the title in detail, over many thousands of hours (real or simulated), the neural network can build its own internal model of how the game runs and its power/performance requirements. The end result can be put into one dense sentence:

Optimized Per-Device Per-Game DVFS Control using Neural Networks

In the training phase, the network analyzes and adjusts the SoC’s DVFS parameters in order to achieve the best possible performance while minimizing power consumption. This entails finding the lowest DVFS states on the CPUs, GPU, and memory controllers that still allow for hitting 60fps, without going to any higher state than is necessary (in other words, minimizing performance headroom). The end result is that for every unit of work that the CPU/GPU/DRAM has to do or manage, the corresponding hardware block draws only as much power as it needs. This has a knock-on effect on both performance and power consumption, but mostly on the latter.
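To make the "minimal headroom" idea concrete, here is a minimal sketch of choosing the lowest frequency state that still fits a frame into a 60fps budget. The frequency table, the per-frame workload figures, and the function name are all hypothetical; Huawei has not published its DVFS tables or the training objective, so this only illustrates the selection rule described above.

```python
from typing import Sequence

# Hypothetical GPU frequency states in MHz (assumption, not real Kirin values).
GPU_FREQS_MHZ = (200, 400, 600, 800)


def lowest_sufficient_freq(
    work_mcycles: float,
    freqs_mhz: Sequence[float] = GPU_FREQS_MHZ,
    target_fps: float = 60.0,
) -> float:
    """Return the lowest frequency that finishes the frame within budget.

    work_mcycles is the frame's workload in millions of cycles, so
    work_mcycles / freq_mhz gives the frame time in seconds.
    """
    frame_budget_s = 1.0 / target_fps
    for freq in sorted(freqs_mhz):
        if work_mcycles / freq <= frame_budget_s:
            return freq
    # No state is fast enough: fall back to the highest one available.
    return max(freqs_mhz)
```

Under these assumed numbers, a 5-megacycle frame would be served at 400MHz rather than 800MHz, which is where the power saving comes from.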

The resulting model is then included in the firmware for devices that support GPU Turbo. Each title has a specific network model for each smartphone, as the workload varies with the title and the resources available vary with the phone model. As far as we understand the technology, on the device itself there appears to be an interception layer between the application and the GPU driver which monitors render calls. These calls serve as inputs to the neural network model. Because the network model was trained to output the optimal DVFS settings for a given scene, the GPU Turbo mechanism can apply its output immediately to the hardware and adjust the DVFS accordingly.
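The flow described above (render calls observed by a shim, fed to a model, whose output is applied as DVFS settings) can be sketched roughly as follows. Everything here is an assumption made for illustration: the class and function names, the choice of draw-call and vertex counts as features, and the toy rule that stands in for the trained network. It is not Huawei’s implementation.

```python
from dataclasses import dataclass
from typing import Callable, Dict


@dataclass
class FrameStats:
    """Per-frame features gathered from render calls (hypothetical choice)."""
    draw_calls: int = 0
    vertices: int = 0


class InterceptionLayer:
    """Hypothetical shim sitting between the game and the GPU driver."""

    def __init__(
        self,
        model: Callable[[FrameStats], Dict[str, int]],
        apply_dvfs: Callable[[Dict[str, int]], None],
    ) -> None:
        self.model = model
        self.apply_dvfs = apply_dvfs
        self.stats = FrameStats()

    def draw(self, vertex_count: int) -> None:
        # Record the call; a real shim would also forward it to the driver.
        self.stats.draw_calls += 1
        self.stats.vertices += vertex_count

    def end_frame(self) -> None:
        # Feed the observed render calls to the model, apply its output,
        # then start collecting statistics for the next frame.
        self.apply_dvfs(self.model(self.stats))
        self.stats = FrameStats()


def toy_model(stats: FrameStats) -> Dict[str, int]:
    """Stand-in for the trained network: heavier frames get higher states."""
    level = min(3, stats.vertices // 100_000)
    return {"cpu": level, "gpu": level, "ddr": level}
```

For example, constructing `InterceptionLayer(toy_model, applied.append)` with an empty list `applied`, issuing two draws of 150,000 and 120,000 vertices, and calling `end_frame()` leaves one settings dictionary in `applied`.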

For SoCs that have one, the inferencing (execution) of the network model is accelerated by the SoC’s own NPU. Where GPU Turbo is introduced on SoCs that don’t sport an NPU, a CPU software fall-back is used. This allows for extremely fast prediction. One thing that I do have to wonder is just how much rendering latency this induces; it can’t be that much, however, and Huawei says it focuses a lot on this area of the implementation. Huawei confirmed that these models are all 16-bit floating point (FP16), which means that for future SoCs like the Kirin 980, further optimization might occur through using INT8 models, based on the new NPU’s support for them.
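For readers unfamiliar with what moving from FP16 to INT8 involves: each weight shrinks from two bytes to one, at the cost of a small rounding error. The sketch below shows symmetric per-tensor quantization, one common scheme. Whether Huawei would use this scheme is an assumption on our part, and the function names are ours.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Map float weights onto integers in [-127, 127] with one shared scale."""
    max_abs = max(abs(w) for w in weights)
    # Guard against an all-zero tensor, where any scale would do.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from the INT8 values."""
    return [q * scale for q in quantized]
```

Each recovered weight differs from the original by at most half the scale, which is the accuracy a model gives up in exchange for the smaller footprint and faster integer arithmetic.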

Essentially, because GPU Turbo is in effect a DVFS mechanism that works in conjunction with the rendering pipeline and with a much finer granularity, it’s able to predict the hardware requirements for the coming frame and adjust accordingly. This is how GPU Turbo in particular is able to make claims of much reduced performance jitter versus more conventional "reactive" DVFS drivers, which just monitor GPU utilization rate via hardware counters and adapt after-the-fact.
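The difference between reactive and predictive DVFS can be shown with a toy simulation. A reactive governor sizes the clock for the load it saw on the previous frame, while a predictive one sizes it for the frame about to be rendered. The frequency table and workloads are hypothetical, and the predictive case assumes a perfect predictor, which a real neural network would only approximate.

```python
from typing import Sequence

FREQS_MHZ = (200, 400, 600, 800)  # hypothetical GPU states
FRAME_BUDGET_S = 1.0 / 60.0       # 60fps target


def pick_freq(work_mcycles: float) -> float:
    """Lowest frequency that fits the given workload into one frame."""
    for freq in sorted(FREQS_MHZ):
        if work_mcycles / freq <= FRAME_BUDGET_S:
            return freq
    return max(FREQS_MHZ)


def missed_frames(workloads: Sequence[float], predictive: bool) -> int:
    """Count frames that overrun the budget under each governor style."""
    missed = 0
    previous = workloads[0]
    for work in workloads:
        # Reactive: clock chosen from the previous frame's load.
        # Predictive: clock chosen from this frame's (assumed known) load.
        basis = work if predictive else previous
        freq = pick_freq(basis)
        if work / freq > FRAME_BUDGET_S:
            missed += 1
        previous = work
    return missed
```

With a workload trace that alternates between light and heavy scenes, such as `[3, 3, 12, 12, 3, 12]` megacycles, the reactive governor misses the budget on every light-to-heavy transition, while the idealized predictive one misses none. Those transition misses are the performance jitter.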

Thoughts After A More Detailed Explanation

What Huawei has done here is certainly an interesting approach with the clear potential for real-world benefits. We can see how distributing resources optimally across available hardware within a limited power budget will help the performance, the efficiency, and the power consumption, all of which is already a careful balancing act in smartphones. So the detailed explanation makes a lot of technical sense, and we have no issues with this at all. It’s a very impressive feat that could have ramifications in a much wider technology space, eventually including PCs.

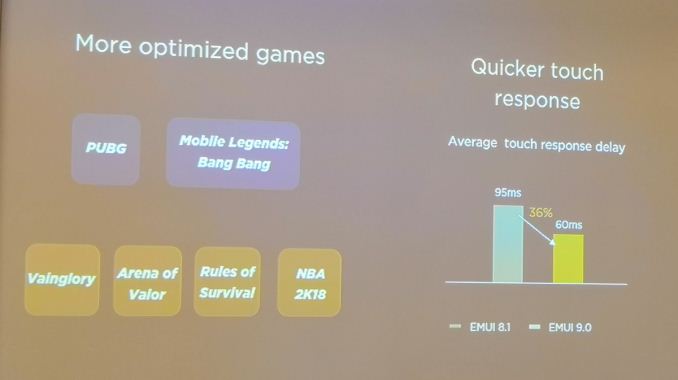

The downside to the technology is the per-device & per-game nature of it. Huawei did not go into detail about how long it took to train a single game: the first version of GPU Turbo supports PUBG and a Chinese game called Mobile Legends: Bang Bang. The second version, coming with the Mate 20, includes NBA 2K18, Rules of Survival, Arena of Valor, and Vainglory.

Technically the granularity is per-SoC rather than per-device, although different devices will have different limits in thermal performance or memory performance. But it is obvious that while Huawei is very proud of the technology, it is a slow per-game rollout. There is no silver bullet here – while an ideal goal would be a single optimized network to deal with every game in the market, we have to rely on default mechanisms to get the job done.

Huawei is going after its core gaming market first with GPU Turbo, which means plenty of Battle Royale and MOBA action, like PUBG and Arena of Valor, as well as tie-ins with companies like 2K/Tencent for NBA 2K18. I suspect on the back of this realization, some companies will want to get in contact with Huawei to add their title to the list of games to be optimized. Our only request is that you also include tools so we can benchmark the game and output frame-time data, please!

On the next page, we go into our analysis of GPU Turbo with the devices we have on hand. We also come across an issue with how Arm’s Mali GPU (used in Huawei Kirin SoCs) renders games differently to Huawei’s competitor devices.

64 Comments

dave_the_nerd - Tuesday, September 4, 2018 - link

Honor 7x? How about the Mate SE, its Huawei twin? (We have one...)

mode_13h - Tuesday, September 4, 2018 - link

> There is no silver bullet here – while an ideal goal would be a single optimized network to deal with every game in the market, we have to rely on default mechanisms to get the job done.

Why not use distributed training across a sampling of players (maybe the first to download each new game or patch) and submit their performance data to a cloud-based training service? The trained models could then be redistributed and potentially further refined.

tygrus - Tuesday, September 4, 2018 - link

The benefits seem <10% and don't beat the competition (e.g. Samsung S8 to S9 models).

Why 7 pages when it could have been done in 3 or 4 pages? The article needed more effort to edit and remove the repetition & fluff before publishing. Maybe I'm having a bad day but it just seemed harder to read than your usual.

LiverpoolFC5903 - Wednesday, September 5, 2018 - link

That is the whole point of the article, to go into depth. If you don't like technical 'fluff', there are hundreds of sites out there with one-pagers more to your liking.

zodiacfml - Wednesday, September 5, 2018 - link

I guess I wasn't wrong when I first heard of this. I was thinking "dynamic resolution" or an image-quality-reducing feature that works on the fly.

s.yu - Friday, September 7, 2018 - link

Technically Huawei isn't directly responsible, you know the article said that all Mali devices run on lower settings.

The thing you didn't anticipate is that they were citing numbers compared to Kirin 960, that threw everyone off. Seeing as they're Huawei I knew they were lying somehow, but seeing how Intel put their cross-generation comparisons in big bold print for so long I didn't realize somebody could hide such a fact in small print, or even omit that in most presentations.

Manch - Wednesday, September 5, 2018 - link

<quote> (Ed: We used to see this a lot in the PC space over 10 years ago, where different GPUs would render different paths or have ‘tricks’ to reduce the workload. They don’t anymore.) </quote>

How does this not happen anymore? Both Nvidia & AMD create game ready drivers to optimize for diff games. AMD does tend to optimize for Mantle/Vulkan moreso than DX12 (Explain SB AMD...WTF?). Regardless these optimizations are meant to extract the best performance per game. Part of that is reducing workload per frame to increase overall FPS, so I don't see how this does not happen anymore.

Ian Cutress - Wednesday, September 5, 2018 - link

This comment was more about the days where 'driver optimizations' meant trading off quality for performance, and vendors were literally reducing the level of detail to get better performance. Back in the day we had to rename Quake3 to Quack3 to show the very visible differences that these 'optimizations' had on gameplay.

Manch - Wednesday, September 5, 2018 - link

Ah OK, makes sense. I do remember those shenanigans. Thanks

Manch - Wednesday, September 5, 2018 - link

oops, messed up the quotes....no coffee :/