Exploring DynamIQ and ARM’s New CPUs: Cortex-A75, Cortex-A55

by Matt Humrick on May 29, 2017 12:00 AM EST

Posted in: Smartphones, CPUs, Arm, Mobile, Cortex, DynamIQ, Cortex A75, Cortex A55

Cortex-A75 Microarchitecture

The Cortex-A75 is the newest member of ARM’s Sophia family of CPUs, which also includes the A73, A17, and A12. It’s no surprise then that the A75 and A73 have much in common just like the A72 and A57 before them (both of which belong to the Austin CPU family); however, ARM’s focus has shifted from improving power efficiency and thermal headroom for A73 to improving performance and adding new features for A75. ARM addressed its performance goals through significant changes to the pipeline and support for DynamIQ, while the new features are a byproduct of moving from the ARMv8.0 architecture to ARMv8.2. For this article, I’m primarily going to focus on what’s new for A75, so I recommend reading our introduction to the A73 to get a more complete understanding of the A75 microarchitecture.

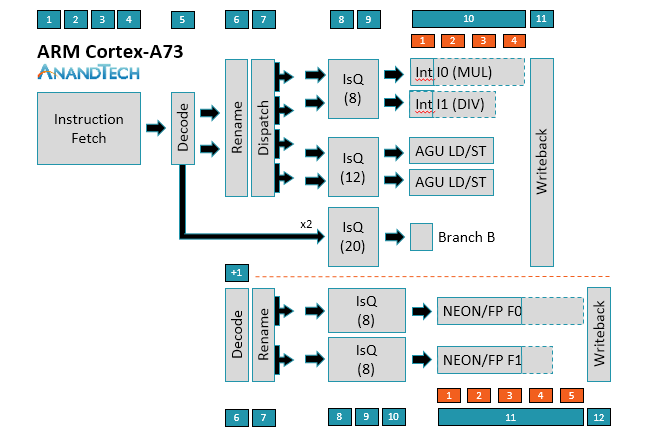

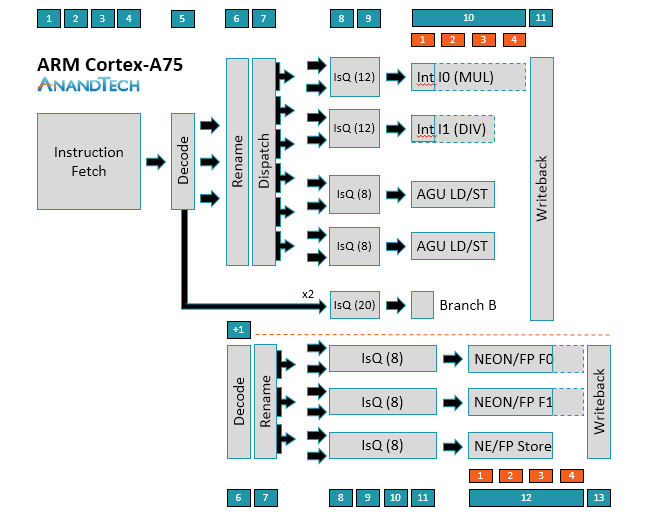

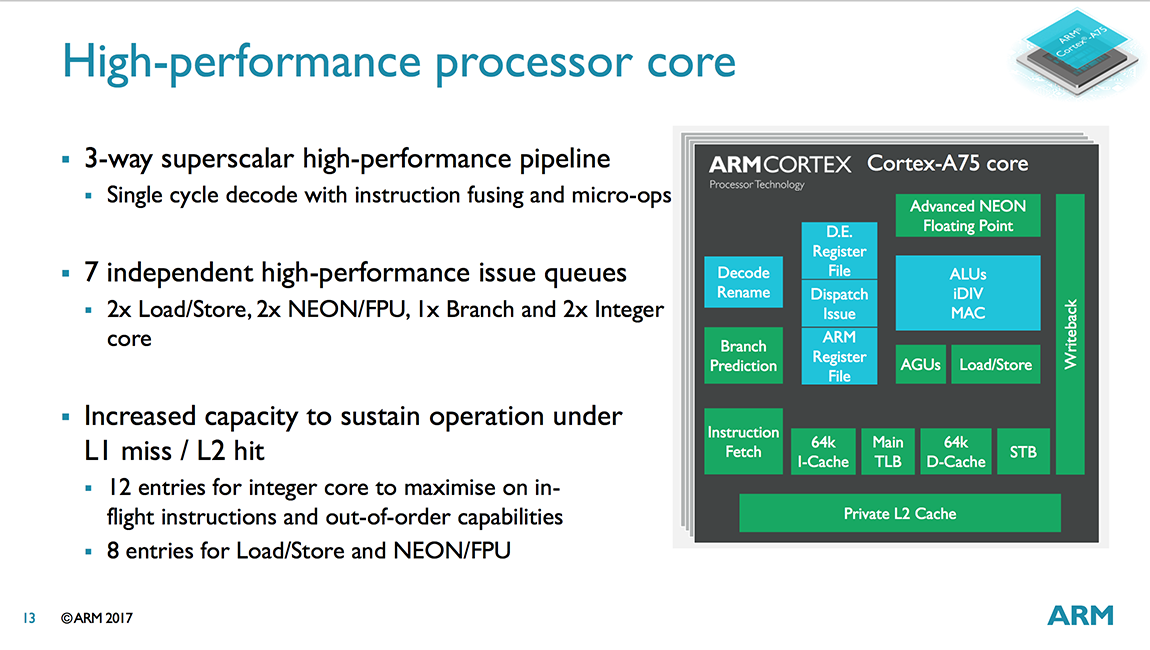

The A75 uses a relatively short 11-13+ stage (depending on instruction type) out-of-order pipeline similar to the A73. Instruction fetch is still 4 stages and the decoder is still able to decode most instructions in a single cycle, with µops destined for the NEON/FP (floating-point) pipelines requiring an additional decode stage; however, moving to 3-wide decode makes the A75 a wider machine than the A73, a big change that will be discussed in greater detail below.

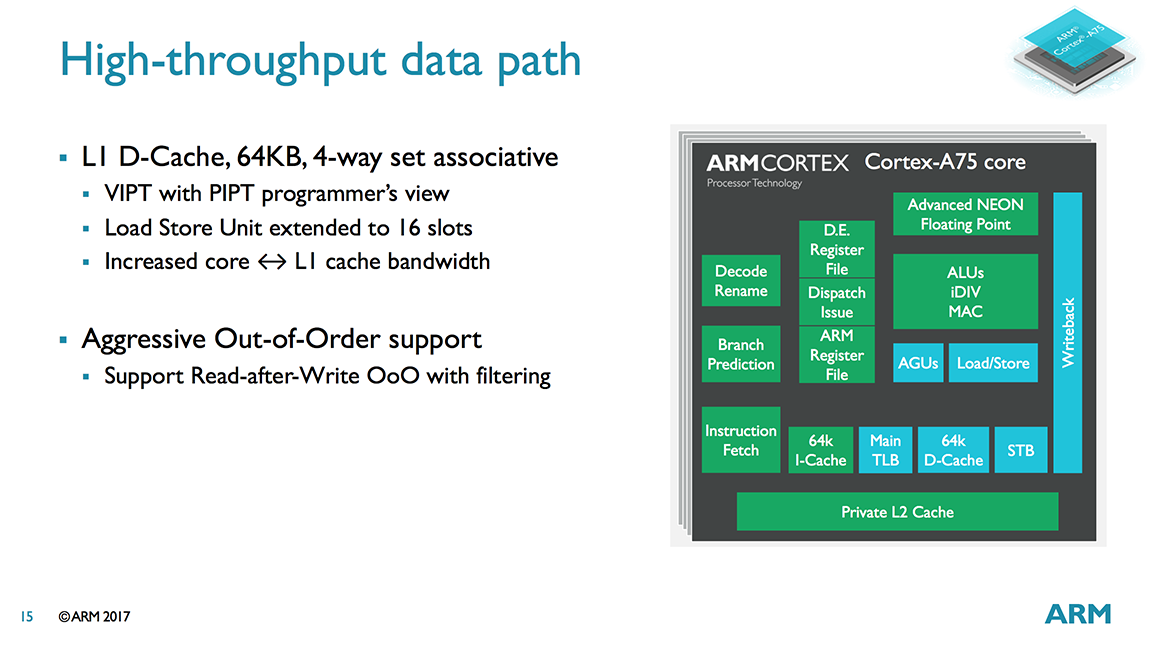

The ability to decode up to 3 instructions/cycle means A75 can now dispatch up to 6 µops/cycle instead of 4 µops/cycle for A73. On the integer side, the A75 can feed up to 2 µops into each issue queue. Instead of shared issue queues for the 2 ALUs and 2 AGUs, each pipe in A75 gets its own issue queue with more entries. This allows the A75 to be more speculative, improving its ability to execute instructions out of order and continue operation during an L1 D-cache miss that hits in L2, for example. The peak issue rate increases to 8 µops/cycle, 1 for each pipe.

As the diagrams for A73/A75 show, simple branch µops can bypass Rename and Dispatch, effectively removing 2 stages of latency; however, more complex branch instructions that require access to registers can spawn additional branch, AGU, and ALU µops that require passing through Rename/Dispatch, with some additional complexity hidden within the Rename stage.

Moving to the NEON/FP side, you’ll notice that there’s no Dispatch stage for A73/A75. Obviously, µops are still being pushed into the issue queues, and there’s still load balancing between queues, but it’s handled differently, which is one reason why the issue queues are 1-2 stages longer than those on the integer side.

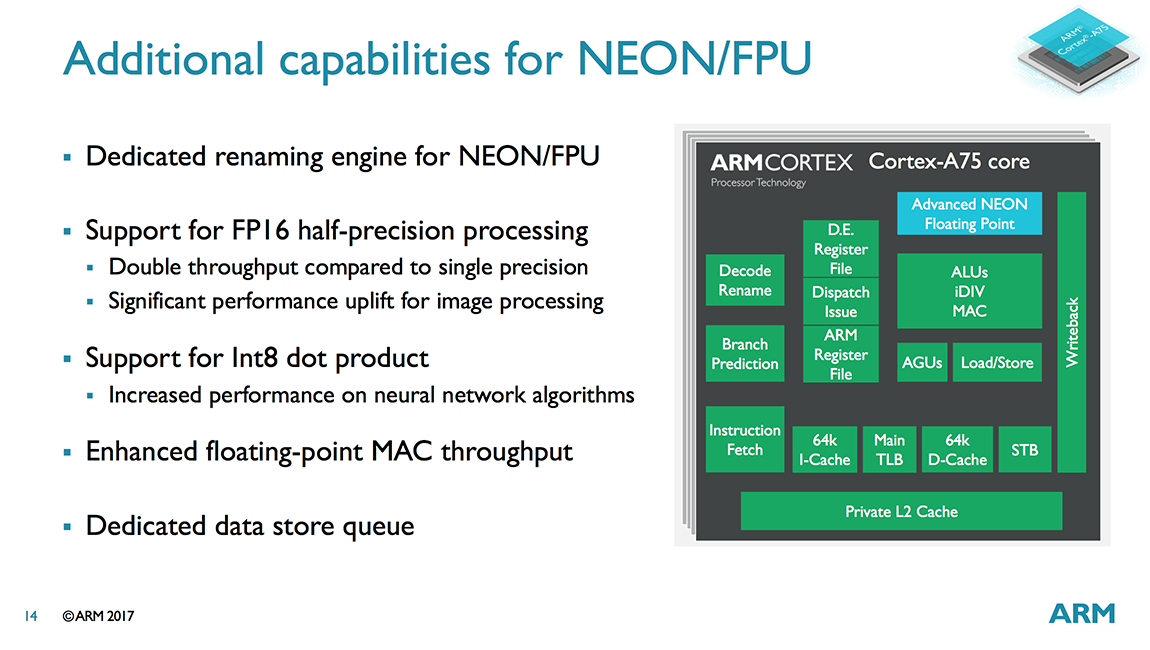

There have been some changes to the NEON/FP side as well. The A75 can now “dispatch” up to 3 µops/cycle and sink up to 2 µops into each issue queue, which grow to 4 stages deep instead of 3 for A73. ARM looked at increasing the number of entries in the issue queues too, but it found this increased power more than performance, so it nixed the idea. Instead it added a dedicated NEON/FP store pipe with its own issue queue. The latency of a FP multiply-accumulate (MAC) has also been reduced to 5 cycles compared to 6 cycles on A73.

I’ll discuss the execution pipelines in greater detail as we work our way through the data path, but let’s start on the instruction side first. The A75 is still a “slot-based microarchitecture,” which was first introduced with the A73. ARM is not disclosing any additional details beyond its basic explanation from last year, namely that there are 8 “slots” that work to eliminate redundant access to resources within the instruction block, which ultimately reduces power consumption.

Both the A73 and A75 have a very simple instruction prefetcher that feeds into a fixed 64KB L1 I-cache that is 4-way set associative and uses a VIPT (Virtually Indexed, Physically Tagged) access scheme, common for L1 caches because of their sensitivity to latency.

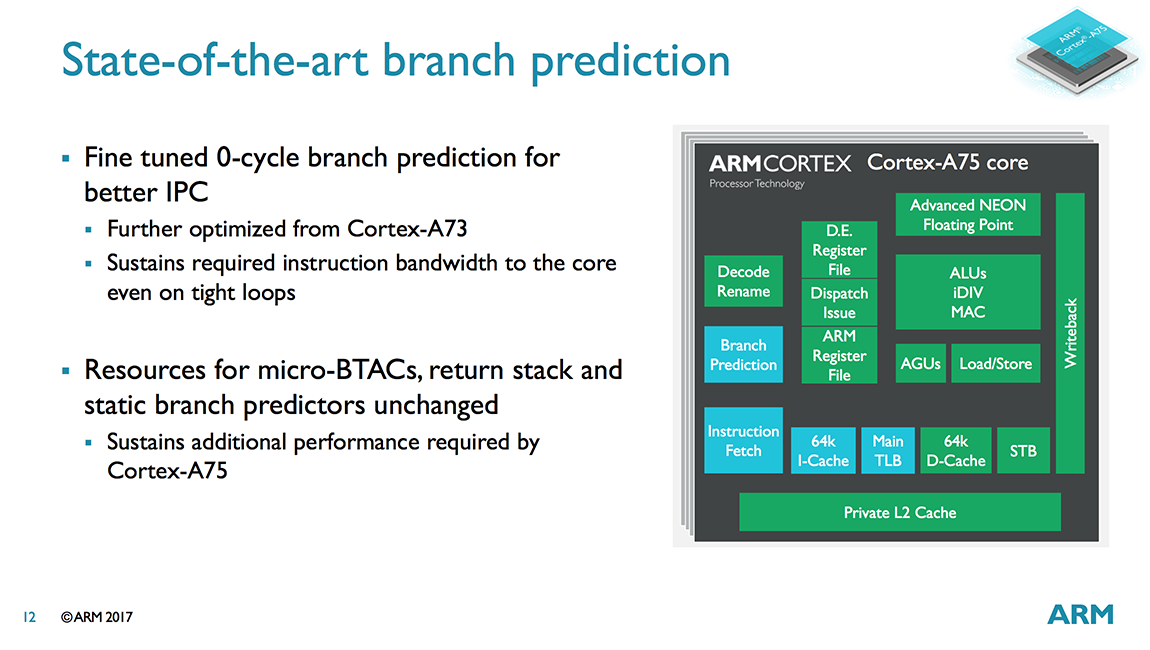

The A73 received a completely new main branch predictor, along with a new 64-entry micro-BTAC for accelerating predictions. In addition to the main predictor, there’s also a static branch predictor, which is used as a fallback when the main predictor has insufficient history, and a return stack, which contains nested subroutine return addresses. An indirect predictor, which is only used when necessary (reducing its power penalty because indirect branches occur less frequently), uses a 2-way 256-entry BTAC (Branch Target Address Cache).

While designing the A75, ARM found that the A73’s branch predictor still performed well and that improving performance further resulted in diminishing returns, with power climbing faster than performance; therefore, the A73’s predictor was carried over to the A75. ARM did fine-tune the 0-cycle micro-predictors, which sit upstream of the main predictor, improving IPC by further reducing the likelihood of pipeline bubbles in tight loops.

As I mentioned above, the A75 moves to a 3-wide instruction decode stage, up from 2-wide for the A73 and matching the 3-wide A72. ARM is always looking for ways to improve IPC (Instructions Per Cycle), and it noted that while running SPECint 2006 the A73 achieves an IPC of roughly 1.2 overall, increasing to 1.6 to 1.8 at specific sections within the test and dipping to 0.4 to 0.6 in others. Even much larger CPUs achieve an average IPC of just over 2. This does not mean you only need a 2-wide decoder, however, because there are situations that require greater throughput. For example, after a branch mispredict that requires a pipeline flush—which may occur 2-4 times for every 1000 instructions—the CPU needs to refill the issue queues as fast as possible so it can begin extracting ILP. So going wider helps throughput when you need a sudden burst of instructions. There’s a power and area penalty for going wider of course, because it causes a ripple effect through the rest of the pipeline, but it was clear to ARM that moving to 3-way superscalar was necessary to meet its IPC goals.
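To put rough numbers on the burst argument, here is a minimal Python sketch. It assumes the decoder is the only bottleneck after a flush, and the burst size of 24 instructions is a hypothetical figure chosen for illustration, not something ARM disclosed.

```python
import math


def cycles_to_deliver(instructions: int, decode_width: int) -> int:
    """Cycles needed to decode a burst, assuming decode is the only limit."""
    return math.ceil(instructions / decode_width)


# Hypothetical number of instructions needed to refill the issue queues
# after a mispredict flush (an assumption for illustration only).
BURST = 24

for width in (2, 3):
    print(f"{width}-wide decode: {cycles_to_deliver(BURST, width)} cycles")
```

Under these assumptions the 3-wide front end refills the machine in two thirds of the time, even though the average IPC is well below 2.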

The A75’s Rename and Dispatch stages are similar to the A73’s. Like the A73 and other Sophia CPUs, there’s no reorder buffer or architectural register file in the A75. Instead it uses a physical register file for storing µop operands, reducing power by limiting the amount of data moving around the CPU and eliminating some instruction window bottlenecks that arise from using a reorder buffer.

The A75 does see some optimizations here, including the ability for loads to bypass writes, improving the core’s ability to execute out of order and better cope with an L2 cache miss. ARM also found that certain instructions that get cracked during the decode stage (because they need access to the register file during rename) were using too many entries in the A73’s issue queues, so the A75 now recombines these back into a single instruction after the rename stage, freeing up space in the issue queues for other µops.

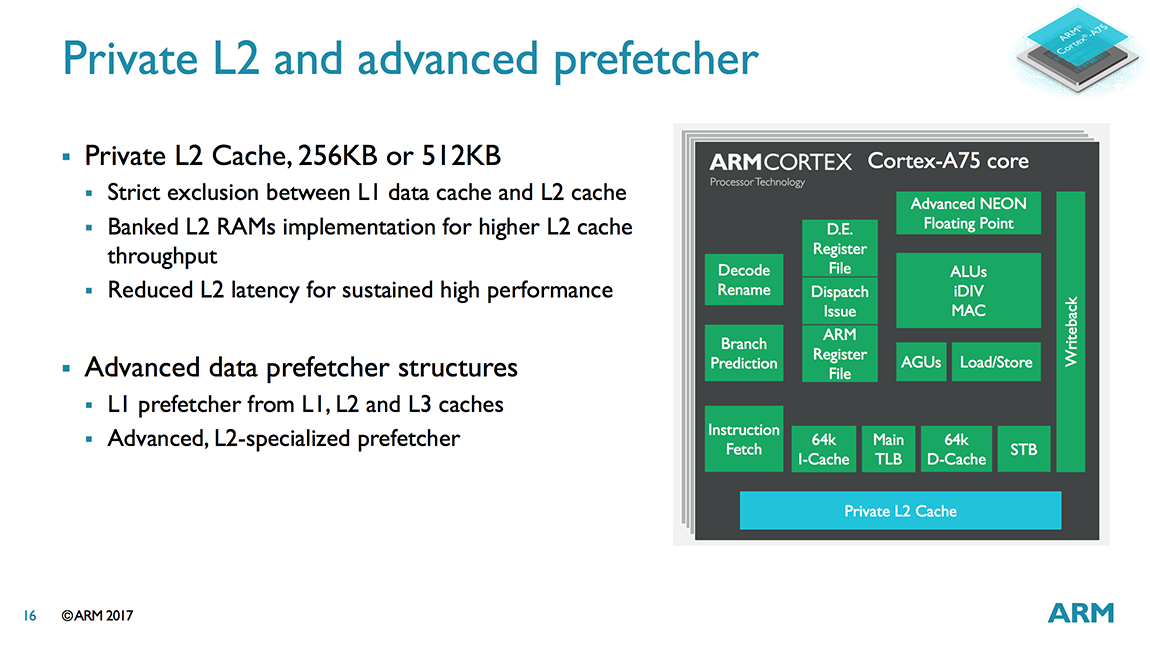

Moving over to the data path, we find an improved data prefetcher. The L1 and L2 prefetchers were already overhauled for A73, but the stride prefetcher has been retuned to better handle out of order execution for A75.

The 64KB L1 D-cache carries over nearly unchanged from A73. This is VIPT like the L1 I-cache, which reduces latency by performing the cache index lookup in parallel with the TLB translation. The A73/A75 handle aliasing issues, where several virtual addresses might reference the same physical address, in hardware, making the 4-way set associative VIPT cache look like a PIPT 8-way 32KB or 16-way 64KB cache to the programmer.
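The 8-way and 16-way figures fall out of simple arithmetic, sketched below in Python. The 4KB page size is my assumption (the article does not state it), and `pipt_equivalent_ways` is an illustrative helper, not an ARM term.

```python
def pipt_equivalent_ways(cache_bytes: int, ways: int, page_bytes: int = 4096) -> int:
    """Associativity of an alias-free PIPT cache of the same capacity.

    When one way is larger than a page, some index bits come from the
    virtual page number, so aliases of one physical line can land in
    several different index positions ("colors").
    """
    way_bytes = cache_bytes // ways
    colors = max(1, way_bytes // page_bytes)
    return ways * colors


for size_kb in (32, 64):
    ways = pipt_equivalent_ways(size_kb * 1024, ways=4)
    print(f"{size_kb}KB 4-way VIPT behaves like {ways}-way PIPT")
```

With 4KB pages, a 32KB 4-way cache has 8KB ways (2 colors) and a 64KB 4-way cache has 16KB ways (4 colors), which matches the 8-way and 16-way equivalents quoted above.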

The A75 gets an integrated L2 cache that operates at core speed, reducing latency by more than 50% compared to the A73, which shares an L2 cache with the other CPUs in the same cluster. For instruction fetch, latency drops from 20-25 cycles to 11 cycles (10 cycles for an L1 miss, L2 hit), and for the lowest latency scenario (a load that forwards to the AGU because of a dependent load address) latency drops from 19 cycles to 8 cycles.

The optional L2 cache can be either 256KB or 512KB. Choosing the 512KB option only improves performance by about 2% compared to 256KB for a single core, but provides a better 4-5% uplift when using 4 A75 cores with DynamIQ. The L1 D-cache and L2 are now fully-exclusive instead of pseudo-exclusive like A73, which saves area because data is not duplicated in the L2 cache. The L1 I-cache is pseudo-inclusive.

ARM improved the overall L2 hit rate by biasing the L2 cache replacement policy to have a higher affinity for instructions. The L2’s higher hit rate and lower latency improves performance but also saves power and area by allowing the A75 to continue using a very simple instruction prefetcher.

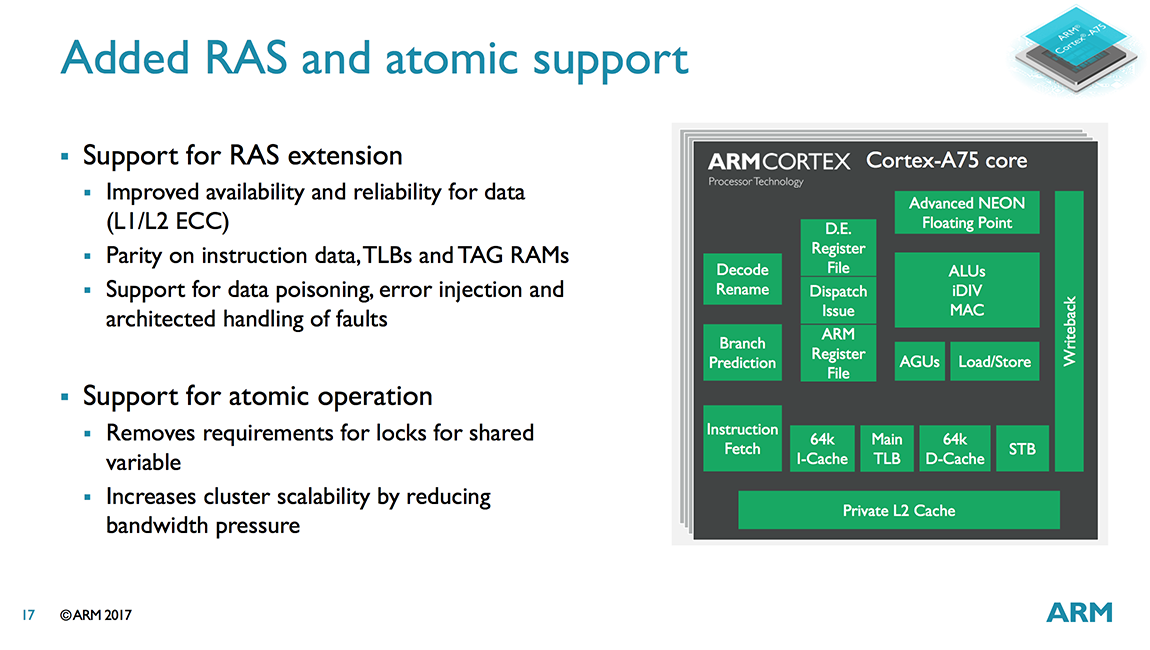

The A75’s main TLB is now non-blocking with a two outstanding fetch capability including hit under miss (the A73 main TLB is a blocking design). This change improves performance when there’s a TLB miss requiring a page table walk in main system memory. With a non-blocking TLB, it can continue to process translation requests while waiting for the page table walk to complete, which takes a comparatively long time because it requires multiple memory accesses.
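The difference between a blocking and a non-blocking TLB can be illustrated with a toy Python model. Everything here is an assumption for illustration: one translation request arrives per cycle, a hit takes 1 cycle, a page table walk takes a hypothetical 20 cycles, and the non-blocking design allows two walks in flight. It is not a model of the actual A75 hardware.

```python
WALK_CYCLES = 20  # hypothetical page table walk latency


def blocking_cycles(requests, walk=WALK_CYCLES):
    """Blocking TLB: every miss stalls all later requests until the walk ends."""
    return sum(walk if miss else 1 for miss in requests)


def nonblocking_cycles(requests, walk=WALK_CYCLES, max_outstanding=2):
    """Non-blocking TLB: hits proceed under misses, up to N walks in flight."""
    now = 0        # cycle at which the next request is accepted
    pending = []   # completion times of in-flight walks
    finish = 0     # cycle at which the last request completes
    for miss in requests:
        pending = [t for t in pending if t > now]
        if miss:
            if len(pending) >= max_outstanding:
                # All walk slots are busy: wait for the earliest to finish.
                now = min(pending)
                pending = [t for t in pending if t > now]
            pending.append(now + walk)
            finish = max(finish, now + walk)
            now += 1
        else:
            now += 1
            finish = max(finish, now)
    return finish


# True = TLB miss, False = TLB hit (a made-up request stream).
stream = [True, False, False, True, False]
print("blocking:    ", blocking_cycles(stream))
print("non-blocking:", nonblocking_cycles(stream))
```

In this toy stream the second walk overlaps with the first, so total time is set by the last walk to finish rather than by the sum of all walks.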

Our trip through the data side of the A75’s memory system ends with the AGUs (Address Generation Units). Another carry over from the A73, the two AGUs are capable of performing both loads and stores, offering greater flexibility and a higher issue rate into the memory system. The size of the store buffer (STB), where all stores are pushed once they’ve been committed and are no longer speculative, increases to 7 128-bit slots.

Now it’s time to shift our focus to the execution pipelines. The A75’s ALU/INT pipes are the same as A73. Both ALUs can perform basic operations such as additions and shifts, but only one ALU handles integer multiplication and multiply-accumulate operations, while the other focuses on integer division with a Radix-16 divider. This means the A73/A75 cannot perform two integer multiplies or divides in parallel, but it can dual issue a MUL/MAC alongside a divide/add/shift. While nearly all instructions complete in 1 or 2 cycles, more complex integer multiplication and division operations require additional cycles. It’s interesting that after making the move to 3-wide decode, ARM considered adding a third ALU/INT pipe; however, the performance increase was not enough to justify the increase in power.
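The pairing rules described above can be written down as a small Python check. The pipe names `ALU0` and `ALU1` and the operation labels are hypothetical, and the sketch ignores operand dependencies, latencies, and issue queue state.

```python
# Capabilities of the two integer pipes as described in the text.
ALU0 = {"add", "shift", "mul", "mac"}  # the pipe with the multiplier
ALU1 = {"add", "shift", "div"}         # the pipe with the Radix-16 divider


def can_dual_issue(op_a: str, op_b: str) -> bool:
    """True if the two ops can be assigned to different integer pipes."""
    return (op_a in ALU0 and op_b in ALU1) or (op_a in ALU1 and op_b in ALU0)


for pair in [("mul", "div"), ("mac", "add"), ("mul", "mac"), ("div", "div")]:
    print(pair, can_dual_issue(*pair))
```

Two multiplies or two divides fail the check because only one pipe can execute each of them, while any multiply or MAC can pair with a divide, add, or shift.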

The 2 64-bit NEON/Floating Point pipes have their own dedicated Rename stage and 128-bit register file, with each SIMD NEON pipe in the A73/A75 capable of performing 8 8-bit integer, 4 16-bit integer, 2 32-bit integer or single-precision floating-point (FP), or 1 64-bit integer or double-precision FP operations per cycle, giving programmers the flexibility to choose the right balance between precision and performance.
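The lane counts listed above are just the 64-bit pipe width divided by the element width, as this short Python sketch shows. The constant and function names are illustrative.

```python
PIPE_WIDTH_BITS = 64  # width of each NEON/FP pipe, per the text


def lanes(element_bits: int) -> int:
    """Number of elements one pipe can process per cycle."""
    if PIPE_WIDTH_BITS % element_bits:
        raise ValueError("element width must divide the pipe width")
    return PIPE_WIDTH_BITS // element_bits


for bits in (8, 16, 32, 64):
    print(f"{bits}-bit elements: {lanes(bits)} per pipe per cycle")
```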

The A75 also gains native support for half-precision FP16 operations by updating to the ARMv8.2 architecture. Using less precise data types (16 bits for FP16 versus 32 or 64 bits) reduces the amount of memory/cache required to store data and improves memory bandwidth, which can be a desirable trade-off for certain applications like machine learning and image processing. The A73 and earlier big cores could fetch FP16 values, but they needed to be converted to FP32 before execution, resulting in some additional overhead.
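The storage trade-off is easy to see with Python's standard `struct` module, which supports IEEE half precision through the `e` format code. The weight values below are made up for illustration, and the sketch only shows storage size and rounding, not how the A75 executes FP16 math.

```python
import struct


def packed_size(values, fmt: str) -> int:
    """Bytes needed to store the values in the given struct format code."""
    return len(struct.pack(f"<{len(values)}{fmt}", *values))


def roundtrip_fp16(x: float) -> float:
    """Store a value as FP16 and read it back, exposing the rounding error."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


weights = [0.1, -1.5, 3.14159, 0.0078125]  # hypothetical data

for name, fmt in (("FP16", "e"), ("FP32", "f"), ("FP64", "d")):
    print(f"{name}: {packed_size(weights, fmt)} bytes")

for w in weights:
    print(f"{w} stored as FP16 reads back as {roundtrip_fp16(w)}")
```

Values such as -1.5 survive the round trip exactly, while values such as 0.1 pick up a small rounding error, which is the precision being traded for half the footprint of FP32.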

Looking to improve performance, many neural-network algorithms are dropping down to 8-bit precision, especially after training is complete. To speed up these algorithms, the A75 (courtesy of the ARMv8.2 architecture) includes a new INT8 dot product instruction, which combines multiple instructions that previously had to be executed back to back into a single instruction, significantly reducing latency.
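As a rough illustration of what such an instruction computes, the Python sketch below models a signed INT8 dot product with 32-bit accumulators: each accumulator lane receives the sum of four 8-bit by 8-bit products. This is my understanding of the ARMv8.2 dot product semantics rather than anything detailed in ARM's briefing, the function names are hypothetical, and 32-bit wraparound is ignored.

```python
def dot4_accumulate(acc: int, a, b) -> int:
    """One accumulator lane: acc += sum of four signed 8-bit products."""
    if len(a) != 4 or len(b) != 4:
        raise ValueError("each lane consumes exactly four 8-bit pairs")
    for v in (*a, *b):
        if not -128 <= v <= 127:
            raise ValueError("operands must fit in a signed 8-bit integer")
    return acc + sum(x * y for x, y in zip(a, b))


def dot_product_128(acc, a, b):
    """Four lanes over 128-bit vectors: 16 int8 inputs each, 4 accumulators."""
    return [
        dot4_accumulate(acc[i], a[4 * i:4 * i + 4], b[4 * i:4 * i + 4])
        for i in range(4)
    ]


print(dot4_accumulate(10, [1, 2, 3, 4], [5, 6, 7, 8]))
print(dot_product_128([0] * 4, list(range(16)), [1] * 16))
```

Without a dedicated instruction, the same result needs separate widening multiplies and pairwise additions, which is the back-to-back sequence the text refers to.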

Starting with the A73 microarchitecture, ARM worked to improve IPC by moving to 3-wide decode and improving the core’s out-of-order capability, while DynamIQ support means a higher performing integrated L2 cache backed by a new L3 cache. The ARMv8.2 architecture also provides new features and new NEON instructions for accelerating neural networks and image processing.

104 Comments


lilmoe - Monday, May 29, 2017 - link

All of ARM's hope of having any significant, mainstream, server market share was demolished with the announcement of Naples.

Wilco1 - Monday, May 29, 2017 - link

Check https://community.arm.com/processors/b/blog/posts/... - at the end there is a whole section on how much faster Cortex-A75 is compared to Cortex-A72 on SPECrate in big server designs. And Cortex-A55 also has all the RAS features required for servers.

lilmoe - Monday, May 29, 2017 - link

It's about damn time they're addressing the little cores. We might not get anything better until they go fully OoO on both clusters with 7nm and beyond.

Meteor2 - Monday, May 29, 2017 - link

In-order is more efficient, and that's important for 'workhorse' cores, whatever the node. Why waste energy when you don't need to? Like A53, A55 is about doing enough work quick enough, not doing as much as possible as fast as possible. (I think A35 was too weedy for life outside of IoT.)

Plus I suspect that the ARM ISA is suited to in-order.

lilmoe - Monday, May 29, 2017 - link

Energy consumption goes down with each process node advancement. The A53 is spending most of its compute time well over 1.2ghz on flagship SoCs. At 1.5ghz and beyond, it starts wasting more energy than the big cluster at similar performance points. Your point is absolutely correct in an "ideal workload", but the real world is far from that with higher resolution screens and all the other crazy peripherals OEMs employ. We'll have to wait and see the performance energy curve first before determining the sweet spot of the a55 vs where most of the actual workload resides.

Meteor2 - Friday, June 2, 2017 - link

Haven't seen an energy/performance curve on Anandtech for ages :(

nandnandnand - Monday, May 29, 2017 - link

Low tier tech news outlets are saying the A75 has 50% more performance and that the A55 is 2.5x more power efficient:

Compare: https://venturebeat.com/2017/05/28/arm-wants-to-bo... which acknowledges "in some use cases"

To: http://www.zdnet.com/article/arm-launches-new-cort... which just says "up to"

50% figure comes from comparing A73 at 2.4 GHz to A75 at 3.0 GHz: https://www.theregister.co.uk/2017/05/29/arm_cpus_...

jjj - Monday, May 29, 2017 - link

The 2.5x claim was on an ARM slide but it included process shrinks.

The 50% claim seems to be at 2W per core not 3GHz but even then it clearly isn't in SPEC, maybe in Geekbench it gets close to that gain at 2W.

Krysto - Monday, May 29, 2017 - link

I wouldn't be surprised if we do see some configurations at 7nm like that (3GHz). ARM has always tended to give pretty unrealistic clock speed peak performance for its cores, which no chip maker actually ended up implementing, but I think this time it may be different due to Chromebooks and Windows 10 on ARM, where a 15W TDP or even higher is perfectly fine, as long as you also get good performance/W (compared to Intel/AMD).

However, chip makers and OEMs will also have to take into account Windows 10 on ARM emulation, so they'll need to keep those chips at least 10% lower TDP/power consumption than the Intel/AMD competitors at those levels of performance.

jjj - Monday, May 29, 2017 - link

A75 is not aimed at 7nm, that's where the next core comes in.